David vs. Goliath: Can Small Models Win Big with Agentic AI in Hardware Design?

By Shashwat Shankar 1, Subhranshu Pandey 1, Innocent Dengkhw Mochahari 1, Bhabesh Mali 1, Animesh Basak Chowdhury 2, Sukanta Bhattacharjee 1, Chandan Karfa 1

1 Indian Institute of Technology, Guwahati, India

2 NXP USA, Inc.

Abstract

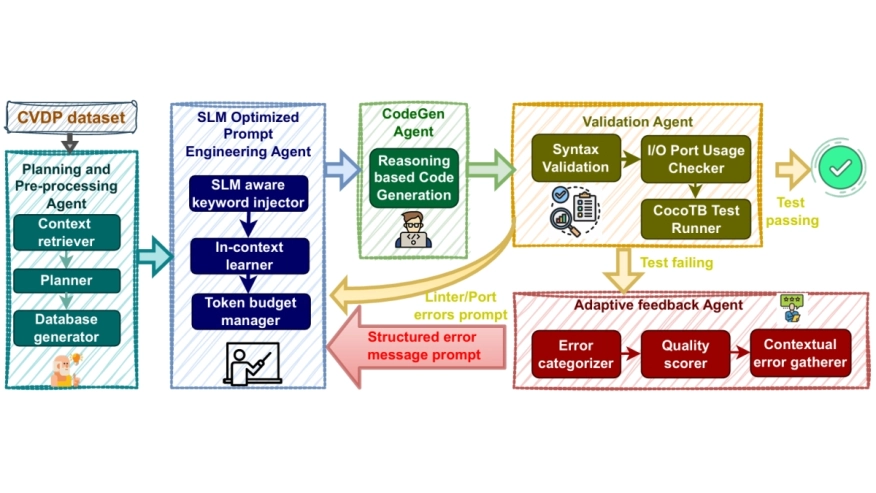

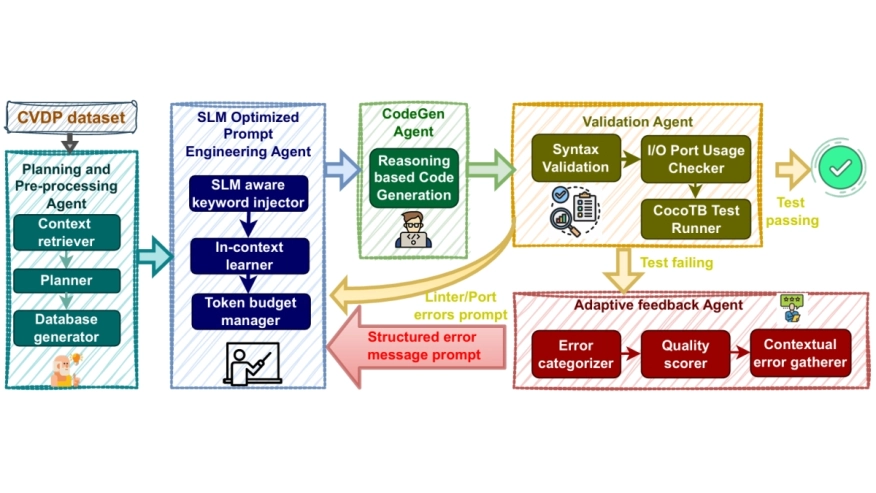

Large Language Model (LLM) inference demands massive compute and energy, making domain-specific tasks expensive and unsustainable. As foundation models keep scaling, we ask: Is bigger always better for hardware design? Our work tests this by evaluating Small Language Models coupled with a curated agentic AI framework on NVIDIA's Comprehensive Verilog Design Problems (CVDP) benchmark. Results show that agentic workflows, through task decomposition, iterative feedback, and correction, not only unlock near-LLM performance at a fraction of the cost but also create learning opportunities for agents, paving the way for efficient, adaptive solutions in complex design tasks.

Keywords: AI assisted Hardware Design, Agentic AI, Large Language Model, Small Language Model, Benchmarking
