AutoGNN: End-to-End Hardware-Driven Graph Preprocessing for Enhanced GNN Performance

By Seungkwan Kang 1, Seungjun Lee 1, Donghyun Gouk 2, Miryeong Kwon 2, Hyunkyu Choi 2, Junhyeok Jang 2, Sangwon Lee 2, Huiwon Choi 1, Jie Zhang 3, Wonil Choi 4, Mahmut Taylan Kandemir 5, Myoungsoo Jung 1,2

1 Computer Architecture and Memory Systems Laboratory, KAIST,

2 Panmnesia, Inc.,

3 Peking University,

4 Hanyang University,

5 Pennsylvania State University

Abstract

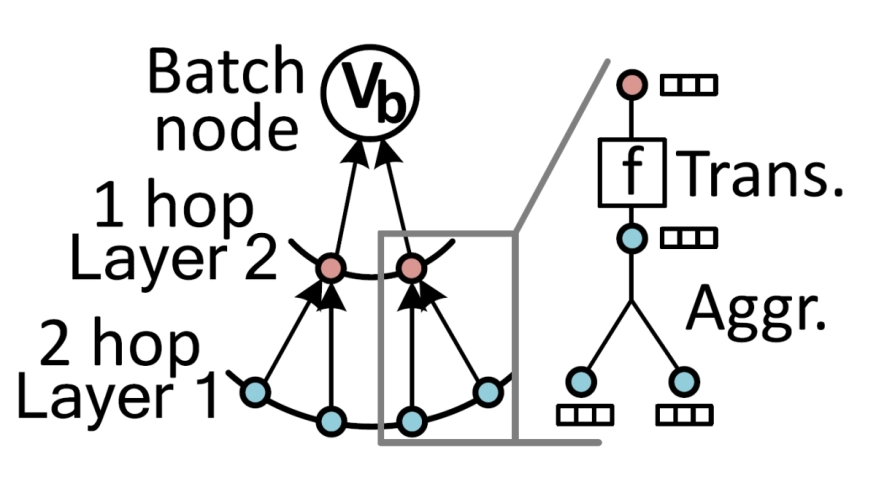

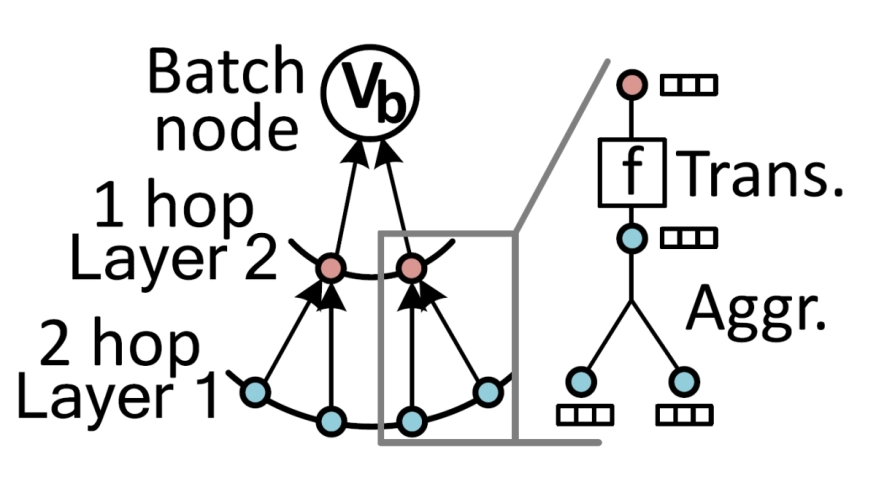

Graph neural network (GNN) inference faces significant bottlenecks in preprocessing, which often dominate overall inference latency. We introduce AutoGNN, an FPGA-based accelerator designed to address these challenges by leveraging FPGA’s reconfigurability and specialized components. AutoGNN adapts to diverse graph inputs, efficiently performing computationally intensive tasks such as graph conversion and sampling. By utilizing components like adder trees, AutoGNN executes reduction operations in constant time, overcoming the limitations of serialization and synchronization on GPUs.
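
To make the adder-tree idea concrete, the following is a minimal software model of a tree reduction. It is only a sketch: the function name `adder_tree_sum` and the zero-padding of odd-sized levels are illustrative assumptions, not details from the paper. In hardware, every adder within a level operates in parallel and a fixed-width tree can be laid out as dedicated logic, which is presumably what allows the reduction to avoid the serialization and synchronization the abstract attributes to GPUs. The Python loop below only mirrors the structure, not the timing.

```python
def adder_tree_sum(values):
    """Software model of an adder tree (hypothetical helper, not from the paper).

    Returns (total, levels), where levels is the number of adder stages
    a tree of this width would need.
    """
    level = list(values)
    levels = 0
    while len(level) > 1:
        if len(level) % 2:
            level.append(0)  # pad so every adder in this stage has two inputs
        # One stage: all pairwise additions are independent of each other,
        # so hardware can perform them simultaneously.
        level = [level[i] + level[i + 1] for i in range(0, len(level), 2)]
        levels += 1
    total = level[0] if level else 0
    return total, levels


total, levels = adder_tree_sum([1, 2, 3, 4, 5, 6, 7, 8])
print(total, levels)
```

For eight inputs the model uses three stages, since the number of stages grows with the logarithm of the input width rather than with the number of inputs.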

AutoGNN integrates unified processing elements (UPEs) and single-cycle reducers (SCRs) to streamline GNN preprocessing. UPEs enable scalable parallel processing for edge sorting and unique vertex selection, while SCRs efficiently handle sequential tasks such as pointer array construction and subgraph reindexing. A user-level software framework dynamically profiles graph inputs, determines optimal configurations, and reprograms AutoGNN to handle varying workloads. Implemented on a 7nm enterprise FPGA, AutoGNN achieves up to 9.0× and 2.1× speedup compared to conventional and GPU-accelerated preprocessing systems, respectively, enabling high-performance GNN preprocessing across diverse datasets.
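
The four preprocessing tasks named above can be illustrated with a plain software reference. This is a sketch under stated assumptions, not AutoGNN's hardware design: it assumes the graph conversion targets a CSR-style layout (a pointer array plus a column index array), and the function names `coo_to_csr` and `reindex_subgraph` are hypothetical. Comments mark which hardware unit the abstract associates with each step.

```python
from itertools import accumulate


def coo_to_csr(num_vertices, edges):
    """Convert an edge list of (src, dst) pairs to CSR-style arrays.

    Assumed target format; the abstract only mentions "graph conversion"
    and "pointer array construction".
    """
    # Edge sorting (a UPE task in the abstract): order edges by source, then destination.
    sorted_edges = sorted(edges)
    # Count outgoing edges per source vertex.
    counts = [0] * num_vertices
    for src, _ in sorted_edges:
        counts[src] += 1
    # Pointer array construction (an SCR task in the abstract):
    # a running sum of the counts, which is sequential in software.
    row_ptr = [0] + list(accumulate(counts))
    col_idx = [dst for _, dst in sorted_edges]
    return row_ptr, col_idx


def reindex_subgraph(sampled_edges):
    """Map the vertex ids of a sampled subgraph to compact local ids."""
    # Unique vertex selection (a UPE task in the abstract).
    unique_vertices = sorted({v for edge in sampled_edges for v in edge})
    # Subgraph reindexing (an SCR task in the abstract).
    local_id = {v: i for i, v in enumerate(unique_vertices)}
    local_edges = [(local_id[s], local_id[d]) for s, d in sampled_edges]
    return unique_vertices, local_edges


row_ptr, col_idx = coo_to_csr(3, [(2, 0), (0, 1), (0, 2), (1, 2)])
print(row_ptr, col_idx)

vertices, local_edges = reindex_subgraph([(7, 3), (3, 9)])
print(vertices, local_edges)
```

In this reference version, the sort and the set construction parallelize naturally, while the running sum and the id remapping each depend on earlier results, which matches the abstract's split between scalable parallel work and sequential tasks.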
