Combined CapEx of Top Eight CSPs to Exceed $710 Billion in 2026; Google Leads ASIC Deployment with TPUs

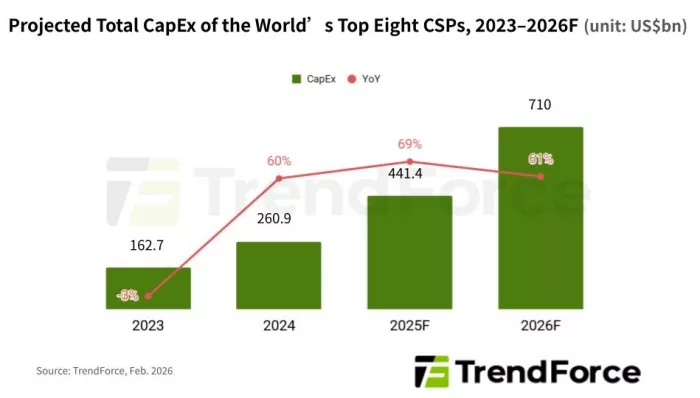

February 25, 2026 -- Global CSPs are accelerating investment in AI servers and infrastructure to support expanding AI deployment and upgrades, according to TrendForce’s latest findings on the AI server market. Combined capital expenditures by the world’s eight leading CSPs (Google, AWS, Meta, Microsoft, Oracle, Tencent, Alibaba, and Baidu) are projected to exceed $710 billion in 2026, representing approximately 61% YoY growth.

In addition to the continued procurement of NVIDIA and AMD GPU platforms, CSPs are increasingly investing in ASICs to optimize AI workload suitability and improve the cost efficiency of data centers.

TrendForce estimates that Alphabet, Google’s parent company, will see its 2026 capital expenditure surpass $178.3 billion, up 95% YoY. Google began developing in-house ASICs earlier than its peers and has accumulated substantial advantages in research and development. Its TPU roadmap is expected to transition to the next-generation v8 platform this year.

Driven by demand from the Google Cloud Platform and Gemini AI applications, TPUs are projected to account for nearly 78% of AI servers shipped to Google in 2026. This is expected to further widen the gap with GPU-based systems. Google remains the only CSP whose AI server build-out features more ASIC-based servers than GPU-based ones.

Amazon has recently increased procurement of NVIDIA GB300 and VR200 rack-scale systems, reflecting accelerated deployment of higher-power, higher-density GPU platforms to support expanding AI training and inference services. GPUs are expected to represent nearly 60% of AWS’s AI server build-out in 2026.

On the ASIC front, Amazon’s next-generation Trainium 3 is expected to ramp starting 2Q26, following the rollout of Trainium 2/2.5. However, shipment momentum may become more pronounced in the second half of the year as software maturity and system validation progress.

TrendForce projects Meta’s 2026 CapEx to exceed $124.5 billion, up 77% YoY. Meta’s AI servers will continue to rely primarily on NVIDIA and AMD GPUs, with GPU-based systems accounting for over 80% of its build-out.

Although Meta aims to advance its in-house MTIA ASIC platform to lower unit compute costs and reduce supplier dependence, supply chain sources indicate that software-hardware tuning challenges may constrain actual shipment volumes relative to initial expectations.

Microsoft remains optimistic about long-term demand for large-scale model training and inference and continues to procure NVIDIA rack-scale systems to support AI server deployments. It has also introduced its in-house Maia 200 chip, targeting high-efficiency AI inference applications.

Meanwhile, Oracle is expanding GPU rack-scale deployments to support AI data center projects tied to initiatives such as Stargate and OpenAI.

On the Chinese side, while ByteDance has not publicly disclosed detailed 2026 CapEx plans, TrendForce estimates that over half of its investment will be allocated to procuring AI chips. NVIDIA H200 is expected to serve as a key solution for ByteDance’s AI servers, subject to U.S.-China regulatory developments. The company is also expanding the adoption of domestic AI chips, including solutions from Cambricon.

Tencent continues to procure NVIDIA GPUs to support cloud and generative AI services, while collaborating with local partners to develop in-house ASIC solutions across networking, data center infrastructure, and online AI applications to diversify compute sources and enhance integration flexibility.

Alibaba and Baidu are both actively advancing proprietary ASIC development. Alibaba, through T-Head and Alibaba Cloud, supports public cloud and AI infrastructure services while developing Qwen LLMs and application software for enterprise and consumer markets.

Baidu plans to roll out its next-generation Kunlun chips after 2026, targeting large-scale AI training and inference workloads. In parallel, it is advancing its Tianchi AI server cluster platform, capable of linking hundreds of AI chips to boost system-level computing power.

For more information on reports and market data from TrendForce’s Department of Semiconductor Research, please email the Sales Department at SR_MI@trendforce.com.
