NNA IP

Compare 8 IP from 5 vendors (1 - 8)

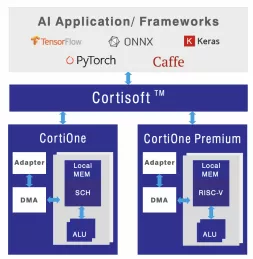

AI Processor Accelerator

- Universal Compatibility: Supports any framework, neural network, and backbone.

- Large Input Frame Handling: Accommodates large input frames without downsizing.

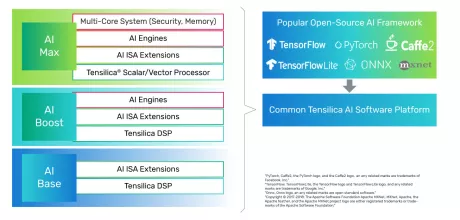

Tensilica AI Max - NNA 110 Single Core

- Scalable Design to Adapt to Various AI Workloads

- Efficient in Mapping State-of-the-Art DL/AI Workloads

- End-to-End Software Toolchain for All Markets and Large Number of Frameworks

High Performance GPU for premium DTVs

- 10-bit RGBA / YUV for HDR experiences

- Advanced image compression technology

- 4xMSAA for smoother image outlines

Efficient GPU ideal for integrating into smart home hubs, set-top boxes or mainstream DTVs

- 10-bit RGBA / YUV for HDR experiences

- Advanced image compression technology

- 4xMSAA for smoother image outlines

- Fully secure GPU virtualisation with HyperLane

Smallest GPU to support native HDR applications, suitable for wearable devices, smart home hubs, or mainstream set-top boxes

- 10-bit RGBA / YUV for HDR experiences

- Advanced image compression technology

- Fully secure GPU virtualisation with HyperLane

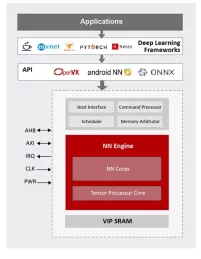

NPU IP for Wearable and IoT Market

- ML inference engine for deeply embedded systems

- NN Engine

- Supports popular ML frameworks

- Supports a wide range of NN algorithms with flexible layer ordering

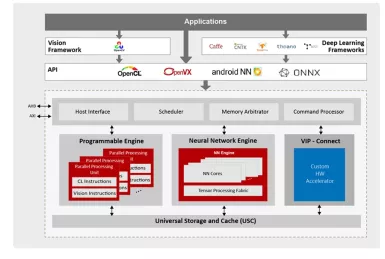

NPU IP for AI Vision and AI Voice

- 128-bit vector processing unit (shader + ext)

- OpenCL 3.0 shader instruction set

- Enhanced vision instruction set (EVIS)

- INT 8/16/32-bit, Float 16/32-bit

Power efficient, high-performance neural network hardware IP for automotive embedded solutions

- Power efficient, high-performance

- For automotive embedded solutions