Overview

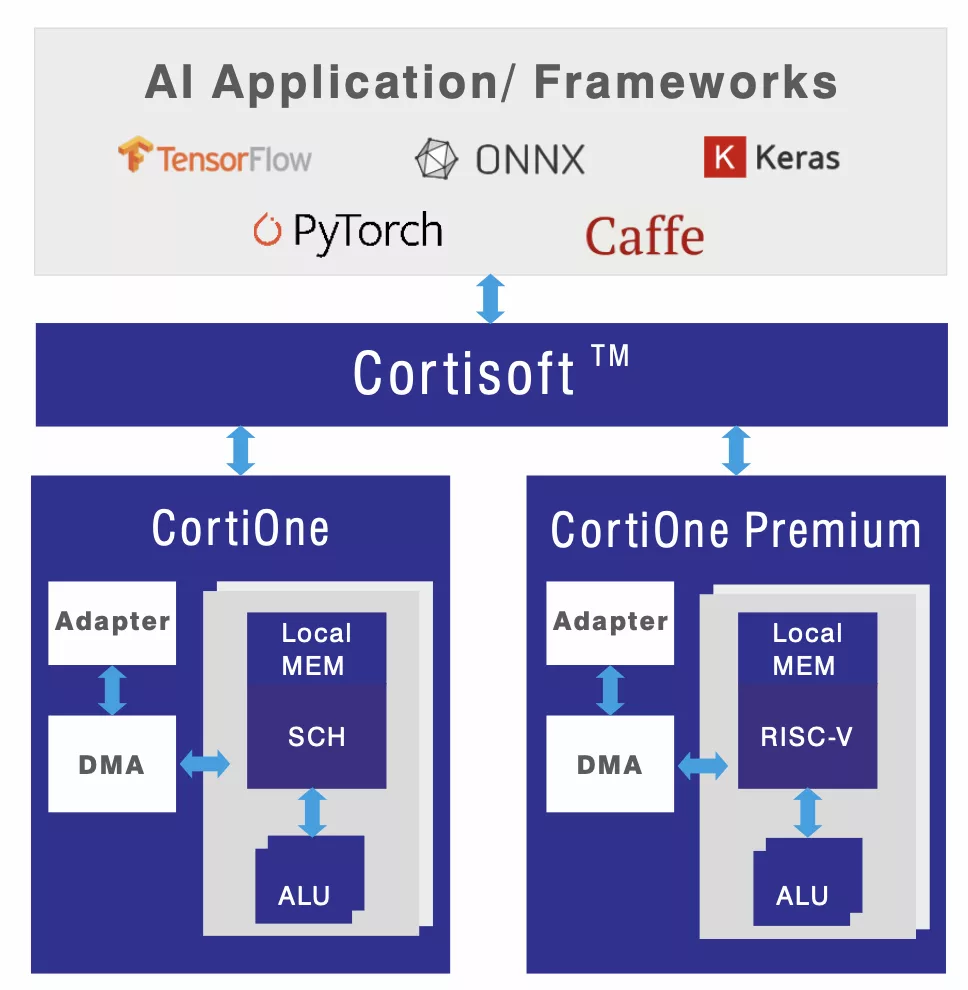

Introducing Gyrus's ground-breaking AI Processor Accelerator IP, coupled with a native graph processing software stack, is the ultimate solution for seamless Neural Network implementation. We have cracked the code on scalability, programmability, and power consumption with a tightly integrated Hardware & Software approach.

The secret to our success lies in our efficient utilisation of compute elements and intelligent memory reuse through reinforcement learning based software, ensuring a seamless ?ow of data to the compute engines with minimal cycles unused. With Gyrus's compilers and software tools, you can effortlessly port any neural network to our hardware accelerator, unlocking exceptional efciency, even with substantial activations and weights.

Our compilers streamline hardware con?guration, reducing SOC complexity and power consumption while enabling AI algorithms to run smoothly on edge devices. The scheduler is a neural scheduler search based on Reinforcement learning. With a cycle-accurate C-Model, we create a Digital Twin of the NNA IP, ensuring long-term model deployment efciency. Elevate your edge device capabilities with Cortisoft from Gyrus!

Learn more about Edge AI Accelerator IP core

While lightweight architectures like MobileNetV2 employ Depthwise Separable Convolutions (DSC) to reduce computational complexity, their multi-stage design introduces a critical performance bottleneck inherent to layer-by-layer execution: the high energy and latency cost of transferring intermediate feature maps to either large on-chip buffers or off-chip DRAM. To address this memory wall, this paper introduces a novel hardware accelerator architecture that utilizes a fused pixel-wise dataflow.

A look at Kinara’s accelerator and NXP processors which combine to deliver edge AI performance capable of delivering smart camera designs

In an ever-changing technology landscape, USB (Universal Serial Bus) has been a cornerstone since its inception in the mid-1990s. Initially designed to simplify the connection of peripherals to personal computers, USB has undergone significant transformations to meet the growing demands for faster data transfer rates, improved power delivery, and enhanced versatility.

Enter the Multi-Protocol SerDes (Serializer/Deserializer)—a flexible, reusable IP block that allows a single PHY to support multiple serial communication protocols, such as PCIe, SATA, Ethernet, USB, and more. This approach enables SoC vendors to meet diverse customer requirements and application needs without redesigning I/O for each target market.

Kurt Shuler, Arteris

William Ruby, Synopsys