Computer Vision DSP IP

Compare 17 IP from 4 vendors (1 - 10)

Vision AI DSP

- Ceva-SensPro is a family of DSP cores that combines vision, radar, and AI processing in a single architecture.

- The silicon-proven cores provide scalable performance for applications that combine vision processing, radar/LiDAR processing, and AI inferencing to interpret their surroundings. These include automotive, robotics, surveillance, AR/VR, mobile devices, and smart homes.

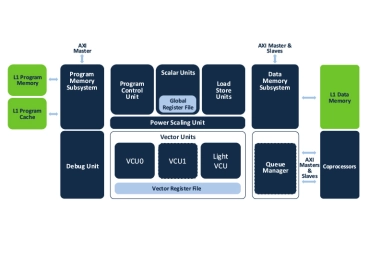

256 8-bit-MAC DSP core

- High performance vector signal processing and efficient control code processing

- 256 8-bit MACs, or 128 16-bit MACs, or 32-bit MACs per cycle

- Flexible vector permute operations

- Maskable vector lanes

32 8-bit-MAC Vector DSP Core

- High performance vector signal processing and efficient control code processing

- 32 8-bit MACs, or 16 16-bit MACs, or 8 32-bit MACs per cycle

- Flexible vector permute operations

- Maskable vector lanes
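The three figures listed for this core (32, 16, and 8 MACs per cycle at 8, 16, and 32 bits) are consistent with a fixed total operand width per cycle, so the MAC count halves each time the operand size doubles. A minimal Python sketch of that arithmetic follows; the function name `macs_per_cycle` and the inverse-scaling rule are assumptions inferred from the listed numbers, not a vendor formula.

```python
def macs_per_cycle(macs_8bit: int, operand_bits: int) -> int:
    """Scale an 8-bit MAC count to a wider operand size.

    Assumption: the datapath processes a fixed number of operand bits per
    cycle, so throughput is inversely proportional to operand width.
    """
    if operand_bits not in (8, 16, 32):
        raise ValueError("operand_bits must be 8, 16, or 32")
    return macs_8bit * 8 // operand_bits


# Reproduce the figures listed for the 32 8-bit-MAC core.
for bits in (8, 16, 32):
    print(f"{bits:>2}-bit operands: {macs_per_cycle(32, bits)} MACs/cycle")
```

Under this assumption the 8-bit count is the only number needed to describe a core; the wider-operand figures follow from it.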

128 8-bit-MAC Vector DSP Core

- High performance vector signal processing and efficient control code processing

- 256 8-bit MACs, or 128 16-bit MACs, or 32-bit MACs per cycle

- Flexible vector permute operations

- Maskable vector lanes

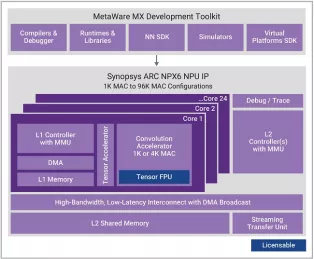

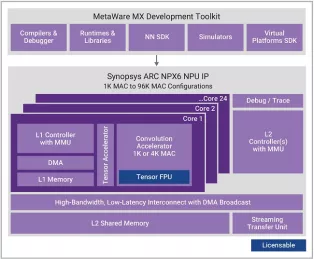

Optional extension of NPX6 NPU tensor operations to include floating-point support with BF16 or BF16+FP16

- Scalable real-time AI / neural processor IP with up to 3,500 TOPS performance

- Supports CNNs, transformers (including generative AI), recommender networks, RNNs/LSTMs, etc.

- Industry-leading power efficiency (up to 30 TOPS/W)

- One 1K MAC core or 1-24 cores of an enhanced 4K MAC/core convolution accelerator

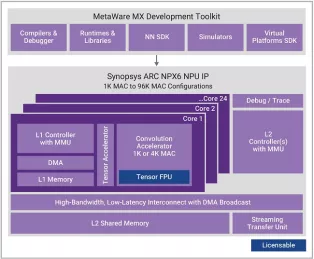

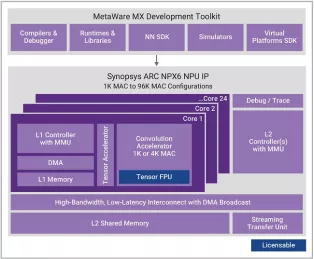

Enhanced Neural Processing Unit providing 98,304 MACs/cycle of performance for AI applications

- Scalable real-time AI / neural processor IP with up to 3,500 TOPS performance

- Supports CNNs, transformers (including generative AI), recommender networks, RNNs/LSTMs, etc.

- Industry-leading power efficiency (up to 30 TOPS/W)

- One 1K MAC core or 1-24 cores of an enhanced 4K MAC/core convolution accelerator
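As a rough way to relate a MACs/cycle figure to operations per second, a common convention counts each MAC as two operations (one multiply, one add). The sketch below applies that convention; the function name `peak_dense_tops` and the 1.0 GHz clock are hypothetical, since the listing states neither a clock frequency nor how its 3,500 TOPS figure is derived.

```python
def peak_dense_tops(macs_per_cycle: int, clock_hz: float) -> float:
    """Peak dense throughput in TOPS, counting one MAC as two operations."""
    ops_per_second = 2 * macs_per_cycle * clock_hz
    return ops_per_second / 1e12


# 98,304 MACs/cycle comes from the entry above; the clock is a placeholder.
HYPOTHETICAL_CLOCK_HZ = 1.0e9
print(f"{peak_dense_tops(98_304, HYPOTHETICAL_CLOCK_HZ):.3f} TOPS")
```

The result scales linearly with the clock, so substituting a real frequency for the placeholder gives the corresponding peak figure for a single instance under this convention.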

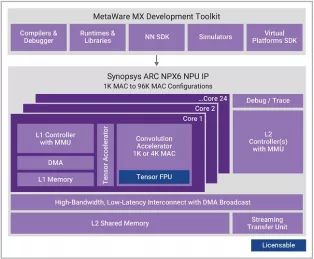

Enhanced Neural Processing Unit providing 8,192 MACs/cycle of performance for AI applications

- Scalable real-time AI / neural processor IP with up to 3,500 TOPS performance

- Supports CNNs, transformers (including generative AI), recommender networks, RNNs/LSTMs, etc.

- Industry-leading power efficiency (up to 30 TOPS/W)

- One 1K MAC core or 1-24 cores of an enhanced 4K MAC/core convolution accelerator

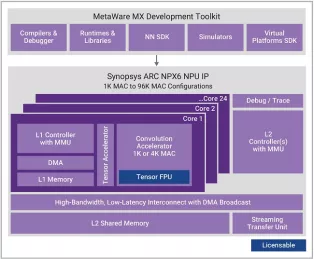

Enhanced Neural Processing Unit providing 65,536 MACs/cycle of performance for AI applications

- Scalable real-time AI / neural processor IP with up to 3,500 TOPS performance

- Supports CNNs, transformers (including generative AI), recommender networks, RNNs/LSTMs, etc.

- Industry-leading power efficiency (up to 30 TOPS/W)

- One 1K MAC core or 1-24 cores of an enhanced 4K MAC/core convolution accelerator

Enhanced Neural Processing Unit providing 49,152 MACs/cycle of performance for AI applications

- Scalable real-time AI / neural processor IP with up to 3,500 TOPS performance

- Supports CNNs, transformers (including generative AI), recommender networks, RNNs/LSTMs, etc.

- Industry-leading power efficiency (up to 30 TOPS/W)

- One 1K MAC core or 1-24 cores of an enhanced 4K MAC/core convolution accelerator

Enhanced Neural Processing Unit providing 4,096 MACs/cycle of performance for AI applications

- Scalable real-time AI / neural processor IP with up to 3,500 TOPS performance

- Supports CNNs, transformers (including generative AI), recommender networks, RNNs/LSTMs, etc.

- Industry-leading power efficiency (up to 30 TOPS/W)

- One 1K MAC core or 1-24 cores of an enhanced 4K MAC/core convolution accelerator
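The MACs/cycle figures in the NPU entries above (98,304; 65,536; 49,152; 8,192; 4,096) are all whole multiples of 4,096, which lines up with the "1-24 cores of an enhanced 4K MAC/core" bullet if 4K is read as 4,096. The sketch below checks that reading; `MACS_PER_CORE` and `implied_cores` are illustrative names, and the mapping from each figure to a core count is an inference, not something the listing states.

```python
MACS_PER_CORE = 4096  # assumption: "4K MAC/core" means 4,096 MACs per core
MAX_CORES = 24        # upper bound taken from the "1-24 cores" bullet


def implied_cores(macs_per_cycle: int) -> int:
    """Return the core count implied by a MACs/cycle figure.

    Raises ValueError if the figure is not a whole multiple of MACS_PER_CORE
    or falls outside the 1..MAX_CORES range.
    """
    cores, remainder = divmod(macs_per_cycle, MACS_PER_CORE)
    if remainder != 0 or not 1 <= cores <= MAX_CORES:
        raise ValueError(f"{macs_per_cycle} does not map to 1-{MAX_CORES} cores")
    return cores


# MACs/cycle figures as they appear in the listing.
for macs in (98_304, 65_536, 49_152, 8_192, 4_096):
    print(f"{macs:>6} MACs/cycle: {implied_cores(macs)} core(s)")
```

The largest listed figure corresponds to the 24-core upper bound under this reading, and the smallest to a single core.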