AIoT processor with vector computing engine

Overview

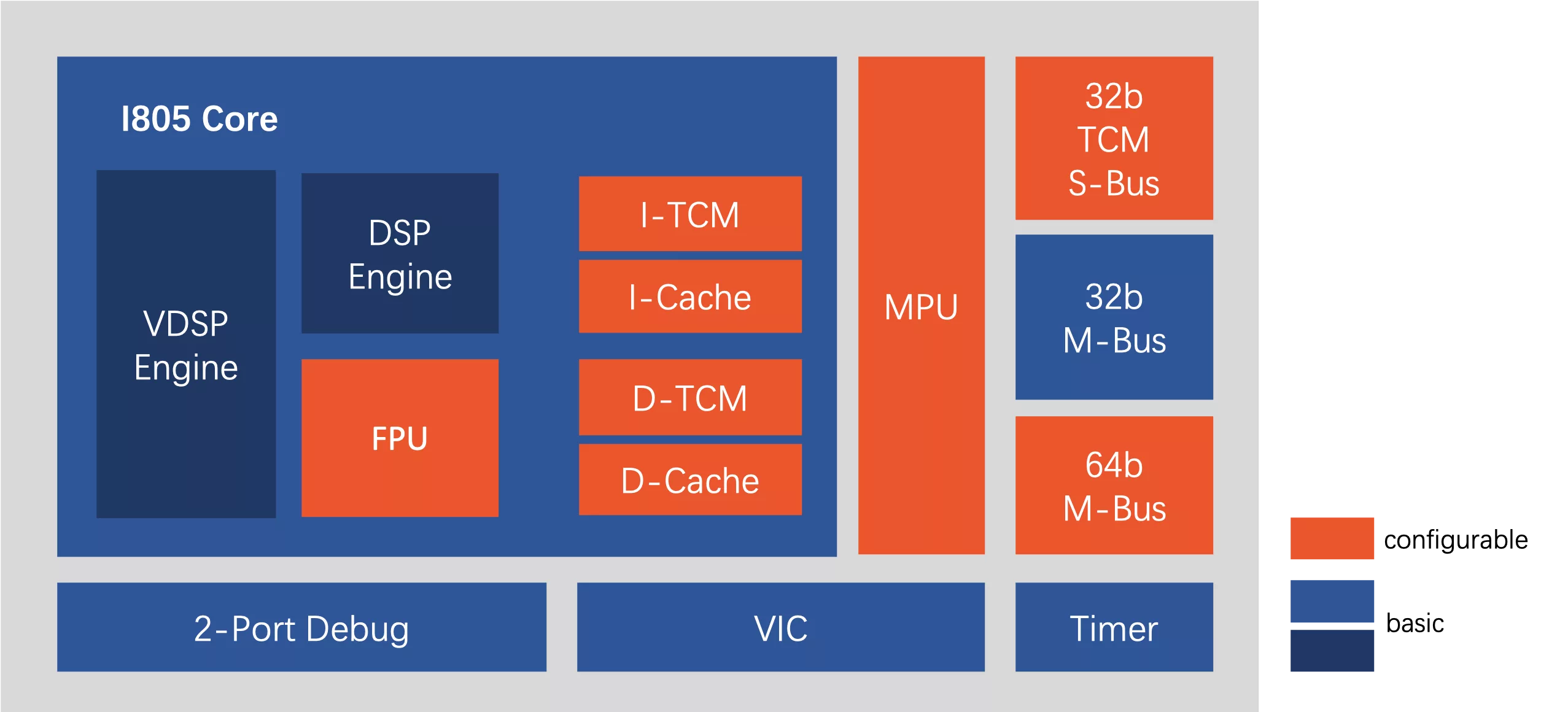

The I805 uses a 4-stage sequential pipeline and includes a vector computing engine aimed at applications such as AI and DSP. Its low-latency tightly coupled memory (TCM) is designed to sustain high data throughput. The core suits applications that need a certain level of computing power, such as audio codecs, voice processing, and lightweight deep learning networks.

Key features

- Instruction set: T-Head ISA (32-bit/16-bit variable-length instruction set);

- Pipeline: 4-stage sequential pipeline;

- General registers: 32 × 32-bit general-purpose registers (GPRs); 16 × 128-bit vector registers (VGPRs);

- Cache: I-Cache: 8 KB/16 KB/32 KB/64 KB (size options); D-Cache: 8 KB/16 KB/32 KB/64 KB (size options);

- Tightly-coupled memory (TCM): I-TCM: 4 KB to 1 MB (size options); D-TCM: 4 KB to 1 MB (size options);

- Tightly-coupled memory slave interface: Independent TCM bus slave interface;

- Bus interface: Dual bus (system bus + peripheral bus);

- Memory protection: 0 to 8 protection zones (configurable);

- Scalar computing engine: 32-bit operation width;

- Vector computing engine: 128-bit operation width;

- Tightly coupled IP: Interrupt controller and timer;

- Floating point engine: Optional single-precision floating point unit;

- Vector computing engine: Increases computing parallelism and accelerates workloads such as AI;

- Low-latency tightly-coupled memory: Increases memory bandwidth for data-intensive computing;

- High-performance unaligned memory access: Accelerates unaligned memory accesses, which benefits DSP applications.
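The register and operation widths listed above imply some simple arithmetic, sketched here in Python. The widths and register counts come from the feature list; the element widths (8, 16, and 32 bits) are illustrative assumptions, since the listing does not state which element types the vector engine supports. The function names are hypothetical helpers, not part of any T-Head toolchain.

```python
# Figures taken from the feature list above.
VECTOR_WIDTH_BITS = 128  # vector computing engine operation width
NUM_VGPRS = 16           # 128-bit vector registers
NUM_GPRS = 32            # 32-bit general-purpose registers
GPR_WIDTH_BITS = 32

# Assumption: illustrative element widths only; the listing does not
# say which element types the vector engine actually supports.
ELEMENT_WIDTHS_BITS = (8, 16, 32)


def lanes_per_register(element_bits: int,
                       register_bits: int = VECTOR_WIDTH_BITS) -> int:
    """Return how many elements of the given width fit in one register."""
    if element_bits <= 0 or register_bits % element_bits != 0:
        raise ValueError("element width must evenly divide the register width")
    return register_bits // element_bits


def register_file_bytes(num_registers: int, register_bits: int) -> int:
    """Return the total architectural register storage in bytes."""
    return num_registers * register_bits // 8


if __name__ == "__main__":
    for bits in ELEMENT_WIDTHS_BITS:
        lanes = lanes_per_register(bits)
        print(f"{bits}-bit elements: {lanes} lanes per 128-bit vector register")
    print("Vector register file:",
          register_file_bytes(NUM_VGPRS, VECTOR_WIDTH_BITS), "bytes")
    print("Scalar register file:",
          register_file_bytes(NUM_GPRS, GPR_WIDTH_BITS), "bytes")
```

The lane counts are an upper bound on the elements a single 128-bit operation could touch. Actual throughput depends on microarchitectural details that this listing does not describe.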

Applications

- Speech Recognition;

- Smart Home Appliances.

Frequently asked questions about Vector Processor IP cores

What is the AIoT processor with vector computing engine?

The AIoT processor with vector computing engine is a Vector Processor IP core from T-Head, listed on Semi IP Hub.

How should engineers evaluate this Vector Processor?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this Vector Processor IP.

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.