Arm and Google Cloud redefine agentic AI infrastructure with Axion processors

Purpose-built Arm CPUs for Google Cloud underpin the latest generation of Google TPUs and enable cost-efficient, high-throughput agent orchestration with GKE Agentic Sandbox

Google Cloud is taking a major step toward operationalizing agentic AI at scale with multiple updates, including new TPU 8t and TPU 8i systems as well as the introduction of its Agent Sandbox on Google Kubernetes Engine (GKE), a purpose-built deployment framework designed to run complex, multi-step AI systems efficiently and securely. This new agentic infrastructure is built on Axion, Google’s Arm Neoverse-based CPU, which underscores a crucial shift toward purpose-built CPU architectures for next-generation AI workloads.

As agentic AI moves from experimentation to production, the infrastructure requirements are changing. Unlike traditional inference, which relies on single model calls, agentic systems orchestrate continuous chains of reasoning, tool use and real-time data retrieval. This dramatically increases concurrency, latency sensitivity and overall compute demand, placing the CPU firmly on the critical path to success.

As agentic AI moves from experimentation to production, the infrastructure requirements are changing. Unlike traditional inference, which relies on single model calls, agentic systems orchestrate continuous chains of reasoning, tool use and real-time data retrieval. This dramatically increases concurrency, latency sensitivity and overall compute demand, placing the CPU firmly on the critical path to success.

This is where Arm-based infrastructure stands apart. Built for high-throughput, energy-efficient compute, Arm Neoverse platforms – and in this case specifically Google Axion – have emerged as the foundation for scalable agentic AI deployments.

Agentic AI at Scale: Axion leads the pack

Google Cloud’s announcement of eighth-generation TPU systems builds on its strong legacy of custom silicon design. This generation introduces a divergence for training and inference applications with the TPU 8t and TPU 8i variants, and, for the first time, it integrates Google Axion CPU as the header. That reduces data preparation latency to ensure the TPU engines stay fully utilized and never stall.

TPU isn’t the end of the story though; Google Cloud is pursuing a co-designed ‘AI Hypercomputer’ vision, and equally important is the introduction of the GKE Agent Sandbox, which offers scalable and low-latency infrastructure designed for agents to safely execute untrusted code and tool calls without sacrificing performance. With Google Axion, you can build agents on leading infrastructure without compromising on cost or choice.

GKE Agent Sandbox running on Google Axion processors and built on gVisor with Kata Containers support delivers up to:

- 300 sandboxes per second per cluster at

- <1s time-to-first-instruction latency

Maintaining this level of sandbox throughput and low-latency execution puts continuous pressure on the underlying infrastructure. As agentic AI becomes a default deployment pattern, the infrastructure beneath it must keep pace with delivering the throughput, responsiveness, and efficiency required to run agentic workloads reliably at scale. Axion is designed to meet that demand.

And as these agentic systems expand, the efficiency of inference becomes critical as well. Without efficient inference, agents cannot function; without agentic orchestration, inference remains underutilized. By landing both critical tasks on CPU-based infrastructure organizations can scale intelligent systems with strong performance while maintaining cost.

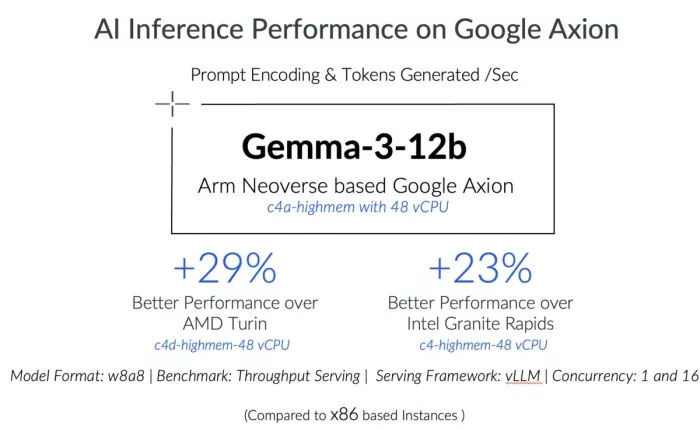

AI inference on Axion: Performance that changes the economics

C4A VMs, powered by Arm Neoverse V2 based Axion CPUs, are optimized to complement pure-play accelerators in handling these parallel, latency-sensitive workloads efficiently by enabling high-throughput AI inference on general-purpose compute.

These capabilities are already becoming evident in production environments. loveholidays, the European online travel platform, runs large-scale embedding and inference workloads across petabytes of data, where accelerator-based approaches can be cost-prohibitive at scale.

“As a business, we are growing our token appetite faster than our budget allows,” said Dimitri Lerko, Head of Engineering, loveholidays. “Running large-scale embedding and inference workloads on GPUs is cost-prohibitive at our data volumes, so maximizing CPU efficiency is critical. Leveraging Axion family of C4A and N4A VMs gives us the price-performance headroom to build real-time AI decision-making pipelines with bespoke and open-source model inference using CPUs — something that simply wasn’t viable before.”

In our testing, C4A consistently outperforms current-generation x86 instances across a range of AI inference workloads:

Extending the Axion portfolio

For workloads that require greater control, the Axion family extends to C4A Metal, a native bare-metal instance (in preview) that brings the same Arm architecture from cloud to edge. It enables consistent development, validation, and deployment across environments, with direct hardware access and no hypervisor overhead for deterministic performance. This is ideal for demanding use cases like automotive vHIL, native Android CI/CD, and specialized enterprise infrastructure requiring greater control, performance, and architectural consistency.

“At Panasonic, we’re building next-generation in-vehicle experiences across the cloud and the car,” said Andrew Poliak, Chief Technology Officer, Panasonic Automotive Systems America, LLC. “During the preview of C4A Metal instances, we used a bare-metal Arm environment that matches our edge architecture, enabling teams to develop, test and validate automotive applications on a single, consistent platform. This allows us to move from cloud to vehicle with bit parity – running the same binaries in both environments – without– architectural compromise.”

Alongside this, N4A, the most recent addition to the Axion family, provides a cost-efficient foundation for scale-out workloads such as web services, APIs, and data pipelines.

Together, C4A, C4A Metal, and N4A form a unified, workload-optimized compute addressing AI inference to scale-out applications, and spans cloud to edge, enabling teams to optimize for both performance and cost on Arm.

A leading ecosystem for Arm-first deployment

Arm now underpins one of the industry’s largest and fastest-growing software ecosystem, driving the shift to Arm-first computing across cloud and edge. Google is already running production services such as YouTube, Gmail, BigQuery, Spanner, Bigtable, Google Earth Engine, Google Compute Engine, GKE Dataflow, Cloud Batch, among others, on Axion processors, and has migrated more than 30,000 internal applications across its production environment.

For organizations beginning their migration, Arm’s Cloud Migration Resource Hub provides 100+ Learning Paths covering common workload patterns on Google Axion. Across the Neoverse ecosystem, the Arm Software Ecosystem Dashboard tracks validated software and recommended versions, while adherence to SystemReady VE standards ensures seamless software interoperability from day one. Leading ISVs including Elastic, MongoDB, Palo Alto Networks, Redis Labs, and Couchbase are fully validated on Axion-based infrastructure.

Get started with Google Axion

Whether deploying agentic workloads with GKE Agent Sandbox, optimizing inference on C4A or scaling general-purpose compute with N4A, Axion provides a consistent, Arm-based foundation for modern AI infrastructure.

Get Started with Google Axion on Google Cloud

Additional Resources:

Related Semiconductor IP

- UCIe D2D Adapter & PHY Integrated IP

- Low Dropout (LDO) Regulator

- 16-Bit xSPI PSRAM PHY

- MIPI CSI-2 CSE2 Security Module

- ASIL B Compliant MIPI CSI-2 CSE2 Security Module

Related Blogs

- How Google and Arm Collaborate on the Next Wave of Cloud Infrastructure

- Improve Apache httpd Performance up to 40% by deploying on Alibaba Cloud Yitian 710 instances

- How Standards Are Unleashing the Power of DPUs for Cloud Computing

- Arm-based Cloud Instances Outperform x86 Instances by up to 64% on VP9 Encoding

Latest Blogs

- Arm and Google Cloud redefine agentic AI infrastructure with Axion processors

- A Bench-to-In-Field Telemetry Platform for Datacenter Power Management

- IDS-Verify™: From Specification to Sign-Off – Automated CSR, Hardware Software Interface and CPU-Peripheral Interface Verification

- RISC-V and GPU Synergy in Practice: A Path Towards High-Performance SoCs from SpacemiT K3

- EDA AI Agents: Intelligent Automation in Semiconductor & PCB Design