Rambus Sets New Benchmark for AI Memory Performance with Industry-Leading HBM4E Controller IP

- Built on a proven track record of over one hundred HBM design wins to ensure first-time silicon success

- Delivers up to 16 Gigabits per second per pin at low latency to meet the demands of next-generation AI and High-Performance Computing (HPC) workloads

- Expands industry-leading silicon IP portfolio of high-performance memory solutions

SAN JOSE, Calif. – March 4, 2026 – Rambus Inc. (NASDAQ: RMBS), a premier chip and silicon IP provider making data faster and safer, today announced the industry’s leading HBM4E Memory Controller IP, extending its market leadership in HBM IP. This new solution delivers breakthrough performance with advanced reliability features, enabling designers to address the demanding memory bandwidth requirements of next-generation AI accelerators and graphics processing units (GPUs).

“Given the insatiable bandwidth demands of AI, it’s imperative for the memory ecosystem to continue aggressively advancing memory performance,” said Simon Blake-Wilson, SVP and general manager of Silicon IP at Rambus. “As a leading silicon IP provider for AI applications, we are bringing the industry’s leading HBM4E Controller IP solution to the market as a key enabler for breakthrough performance in next-generation AI processors and accelerators.”

“HBM4E represents a significant milestone for HBM technology, delivering unprecedented performance for advanced AI and HPC workloads,” said Ben Rhew, corporate vice president and the head of the Foundry IP Development Team at Samsung Electronics. “HBM4E IP solutions will be essential for broad industry adoption, and Samsung looks forward to collaborating closely with Rambus and the wider ecosystem to drive innovation in AI.”

“HBM bandwidth is one of the main bottlenecks on LLM performance, and we’re excited by efforts across the industry to push it further,” said Reiner Pope, co-founder and CEO at MatX.

“AI processors and accelerators need high-performance, high-density HBM memory for the massive computational requirements of AI workloads,” said Soo Kyoum Kim, program associate vice president, Memory Semiconductors at IDC. “As the requirements of AI processors and accelerators continue their rapid rise, HBM solutions must advance apace. HBM4E IP reaching the market now will be an essential building block for designers of cutting-edge AI hardware.”

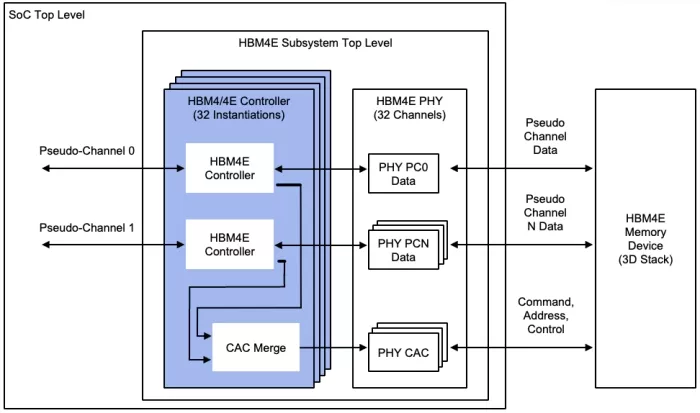

Figure 1: Rambus HBM4E Controller

Rambus HBM4E Controller IP Features:

The Rambus HBM4E Controller enables a new generation of HBM deployments for cutting-edge AI accelerators, graphics and HPC applications. The HBM4E Controller supports operation at up to 16 Gigabits per second (Gbps) per pin, providing an unprecedented throughput of 4.1 Terabytes per second (TB/s) to each memory device. For an AI accelerator with eight attached HBM4E devices, this translates to over 32 TB/s of memory bandwidth for next-generation AI workloads. The Rambus HBM4E Controller IP can be paired with third-party standard or TSV PHY solutions to instantiate a complete HBM4E memory subsystem in a 2.5D or 3D package as part of an AI SoC or custom base die solution.
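The bandwidth figures above can be checked with a short back-of-the-envelope calculation. The sketch below is illustrative only: it assumes a 2,048-bit data interface per HBM4E device (a width not stated in the paragraph above) and decimal units (1 TB = 1,000 GB). The constant and function names are hypothetical and are not part of any Rambus deliverable.

```python
# Back-of-the-envelope check of the quoted peak bandwidth figures.
PIN_RATE_GBPS = 16            # per-pin data rate quoted in the text (Gb/s)
INTERFACE_WIDTH_BITS = 2048   # ASSUMPTION: data pins per HBM4E device
DEVICES = 8                   # devices per accelerator, as in the text's example


def device_bandwidth_tbps(pin_rate_gbps: float, width_bits: int) -> float:
    """Peak bandwidth of one memory device in TB/s (decimal units)."""
    gigabits_per_s = pin_rate_gbps * width_bits   # aggregate Gb/s across all pins
    gigabytes_per_s = gigabits_per_s / 8          # 8 bits per byte
    return gigabytes_per_s / 1000                 # GB/s -> TB/s


per_device = device_bandwidth_tbps(PIN_RATE_GBPS, INTERFACE_WIDTH_BITS)
total = per_device * DEVICES

print(f"per device: {per_device:.3f} TB/s")
print(f"{DEVICES} devices: {total:.3f} TB/s")
```

Under these assumptions the per-device result rounds to the quoted 4.1 TB/s, and the eight-device total lands just above 32 TB/s. These are peak theoretical numbers; sustained bandwidth in a real system depends on access patterns and controller efficiency.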

Availability and More Information:

The Rambus HBM4E Controller IP is the latest addition to the Rambus leading-edge portfolio of digital controller solutions. The HBM4E Controller is available for licensing, and early access design customers can engage today.

Learn more about the Rambus HBM4E Controller IP at https://www.rambus.com/interface-ip/hbm/.
