Overview

Accelerate Edge AI Innovation

AI data-processing workloads at the edge are already transforming use cases and user experiences. The third-generation Ethos NPU helps meet the needs of future edge AI use cases.

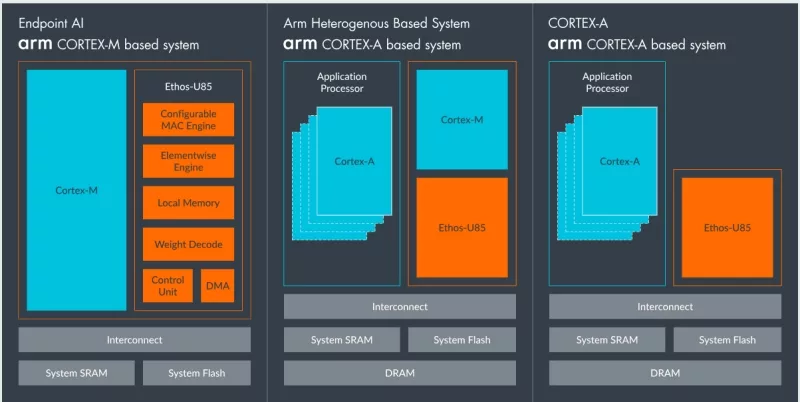

The Ethos-U85 supports transformer-based models at the edge, the foundation for newer language and vision models. It scales from 128 to 2048 MAC units and is 20% more energy efficient than Arm Ethos-U55 and Arm Ethos-U65, enabling higher-performance edge AI use cases in a sustainable way. Because the Ethos-U85 uses the same toolchain as previous Ethos-U generations, partners can migrate seamlessly and leverage their existing investments in Arm-based machine learning (ML) tools.
