Teaching AI Agents to Speak Hardware

AI agents hallucinate when they hit domain-specific silicon knowledge like the Chimera GPNPU. We built an MCP server to address that. Here's what we've learned about where MCP works well and where it gets complicated.

✨> What is "trange" used for on the quadric gpnpu?

I wasn't able to find any public reference to "trange" as a specific term in the Quadric Chimera GPNPU architecture or SDK. It doesn't appear in their product pages, press releases, or technical blog posts that are publicly available.

There's a predictable failure mode with AI agents and specialized hardware stacks. Ask something general and you get a sharp, accurate answer. Ask something specific to our toolchain, such as "What does MinROI mean in this log?" or "Which model handles depthwise separable convolutions on this target?", and the agent either comes up empty or confidently tells you something wrong.

This isn't a flaw in the AI. It's a knowledge boundary. Language models know public research and open-source frameworks. They don't know our silicon architecture, our compiler's output format, or the domain vocabulary our team spent years developing.

The fix is a live connection between the agent and the knowledge it's missing.

Using MCP to Give AI Agents Access to Live Data

We decided to build our own MCP server to address the gap in domain-specific knowledge. The impact on day-to-day work has been immediate and measurable.

Since we implemented this, my bugging of the FAE or factory engineering teams to explain terms or concepts, understand product related decisions and prioritization, and generally pester them with questions has been reduced dramatically, giving them back time to do their actual jobs. And because it's available 24/7 I don't have to worry about delays induced by travel, availability, sleep, or other inconveniences. In fact our entire sales team is using the tool to increase their depth of product understanding and reduce dependence on the FAE team. — Lee, VP of Sales

That's not a small thing. In any company building novel hardware, there are a few people who carry the deep context. They're the ones who get pinged when someone can't parse a compilation log, or needs to understand what a property value actually controls. That knowledge is valuable and finite. An MCP that externalizes it means the experts spend less time answering the same questions and more time on the work only they can do.

Why Build Our Own

Generic MCP servers exist for things like GitHub, web search, and popular SaaS tools. Those aren't useful here, because the knowledge an AI agent needs to be effective with Quadric's stack isn't on the internet.

More specifically, it's spread across several distinct datasets, each with different structure, access patterns, and relevance. Each dataset needs to be queryable in context, and the context changes depending on what the user is doing. Access control adds another dimension.

No off-the-shelf MCP solution understands those boundaries. Access rules would have to be bolted on after the fact, and badly. Building our own meant access is enforced at the resource level, natively, as a first-class constraint rather than an afterthought.
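As an illustration only, here is a minimal sketch of what resource-level enforcement can look like. It is not the DevStudio implementation: the `Resource` type, the `devstudio://` URIs, and the entitlement names are all hypothetical. The point it shows is that the check runs both when resources are listed and again when one is read, so a guessed URI is still refused.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    uri: str                   # hypothetical URI scheme, for illustration
    required_entitlement: str  # hypothetical entitlement name


# Hypothetical catalog; a real server would build this from its own data.
CATALOG = [
    Resource("devstudio://docs/getting-started", "docs"),
    Resource("devstudio://models/example-model", "models"),
    Resource("devstudio://benchmarks/table", "benchmarks"),
]


def list_resources(entitlements: set[str]) -> list[str]:
    """Return only the URIs this caller may see; the rest are never listed."""
    return [r.uri for r in CATALOG if r.required_entitlement in entitlements]


def read_resource(uri: str, entitlements: set[str]) -> str:
    """Re-check the entitlement at read time, not just at list time."""
    for r in CATALOG:
        if r.uri == uri:
            if r.required_entitlement not in entitlements:
                raise PermissionError(f"not entitled to {uri}")
            return f"<contents of {uri}>"  # placeholder payload
    raise KeyError(uri)


print(list_resources({"docs", "models"}))
```

Putting the check inside `read_resource` rather than in a wrapper around the server is the difference between a first-class constraint and a bolted-on one.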

Adding our MCP has reduced hallucinations regarding details of our stack nearly to 0. And maybe even more importantly, it arms every new member of our team with an expert in our stack with endless patience. Nothing we've built has been more useful for learning about how our stuff works and how our designers think. — Mike, Head of CGC

That second point matters for anyone scaling a team around specialized hardware. Onboarding is hard when our stack is novel by design. An AI agent that actually understands our architecture, our terminology, and our tools compresses ramp-up time in a way that documentation alone never has.

What the DevStudio MCP Exposes

The MCP server gives any connected AI agent three tools and a library of live resources drawn directly from DevStudio.

Tools the agent can call:

- Documentation search: full-text and semantic search across Quadric documentation. Ask a question in plain language and get back the relevant sections with links to the source pages.

- Model search: search the model library by name or operator type. Results are filtered to what your account is entitled to access. No guessing about operator support for your configuration.

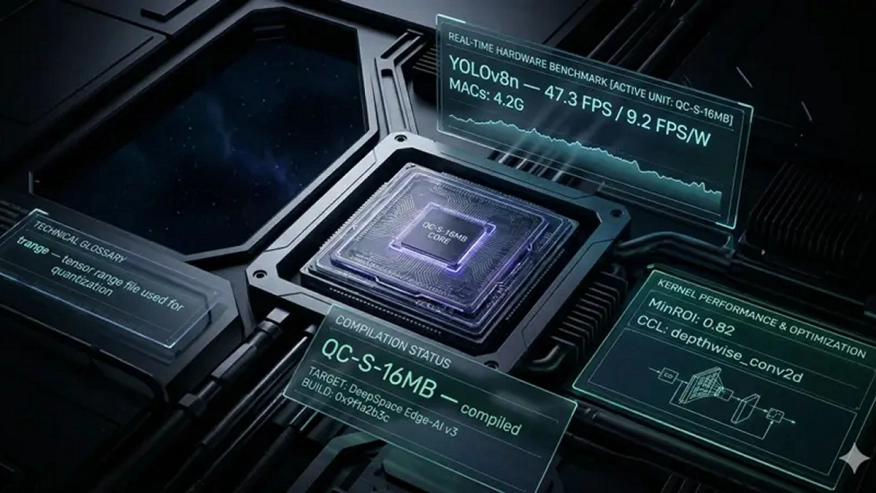

- Glossary lookup: resolve Quadric-specific terminology on demand. MinROI, trange, LRM, PE, GPNPU are terms with precise meanings in our stack that a general-purpose model will otherwise get wrong.
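For readers new to MCP, a tool invocation travels as a JSON-RPC 2.0 request whose method is `tools/call`, with the tool name and its arguments in `params`. The sketch below builds such a request for a glossary lookup. The tool name `glossary_lookup` and the `term` argument are assumptions made for illustration; the actual names exposed by the DevStudio MCP server are not given here.

```python
import json


def make_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


# "glossary_lookup" and "term" are hypothetical names, not the real tool schema.
request = make_tool_call(1, "glossary_lookup", {"term": "trange"})
print(request)
```

In normal use the agent's MCP client produces this message on its own when the model decides to call the tool; nobody writes it by hand.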

Resources available on demand:

- Model definitions: inputs, outputs, properties, and the operations they map to

- Performance benchmarks for any model against any compiled hardware target

- Detailed metrics: MACs, clock frequency, bandwidth, I/O tensor dimensions

- Compilation logs and hardware configuration status

- The complete benchmark table with FPS, FPS/W, and cycle counts across all targets

All of it is live. If you ran a compilation this morning, the results are queryable by the time you open your editor.

The Workflows It Unlocks

Hardware target selection. "What's the best config for YOLOv8n at 30fps with minimum power draw?" The agent fetches the live benchmark table, compares FPS/W across compiled configurations, and explains the tradeoffs without you opening a browser.
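The comparison behind that answer is a filter followed by a ranking. A minimal sketch, assuming the benchmark table has been fetched into rows with FPS and FPS/W columns: the configuration names and every number below are placeholders invented for illustration, not Quadric benchmark results. Power is derived as FPS divided by FPS/W.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BenchmarkRow:
    config: str
    fps: float
    fps_per_watt: float

    @property
    def watts(self) -> float:
        # FPS / (FPS per watt) = watts drawn at that frame rate
        return self.fps / self.fps_per_watt


# Placeholder rows: invented values, NOT real benchmark data.
ROWS = [
    BenchmarkRow("config-a", fps=24.0, fps_per_watt=12.0),  # below the FPS floor
    BenchmarkRow("config-b", fps=36.0, fps_per_watt=12.0),
    BenchmarkRow("config-c", fps=60.0, fps_per_watt=15.0),
]


def pick_target(rows: list[BenchmarkRow], min_fps: float) -> Optional[BenchmarkRow]:
    """Keep configs that meet the FPS floor, then take the lowest power draw."""
    eligible = [r for r in rows if r.fps >= min_fps]
    return min(eligible, key=lambda r: r.watts) if eligible else None


best = pick_target(ROWS, min_fps=30.0)
print(best.config if best else "no config meets the target")
```

With these placeholder rows the most efficient configuration (highest FPS/W) is not the one with the lowest power draw, which is the kind of tradeoff the agent has to explain rather than just report.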

Operator debugging. Compilation fails on an unsupported node. Paste the error. The agent searches the library, pulls the model definition, and tells you exactly what's missing: inputs, properties, the ONNX spec, before you've finished reading the log.

Code authoring. You need the right API for an RAU load pattern. The agent searches DevStudio docs live and returns the relevant section as formatted Markdown, inline in the conversation.

Cross-target performance comparison. "Compare FPS/W for MobileNetV2 across all compiled configs." Structured response, no spreadsheet, no tab-switching.

The common thread: interruptions that used to break your flow, whether a question for a colleague, a detour to a browser tab, or a search that leads to three more searches, collapse into a single in-editor exchange.

What MCP Gets Right and Where It Gets Complicated

MCP's tool model works well for discrete, query-driven interactions: search this, look up that, fetch this record. That covers a lot of ground and is where we see the clearest results internally.

The resource model is more nuanced. MCP defines a way to expose large sets of resources such as documentation pages, model definitions, and benchmark data, but the spec doesn't require AI agents to load those resources into context automatically. Whether an agent fetches a resource, when it decides to, and how much of it gets included is left to each agent's implementation. In practice, behavior varies significantly across AI vendors, sometimes in ways that aren't obvious until you test a specific workflow.

A documentation search that works seamlessly in one agent might require explicit prompting in another. Benchmark data that gets incorporated naturally in one context might be ignored in another. The MCP spec creates the plumbing; the agent's heuristics determine what actually flows through it.
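The gap is visible at the protocol level. A resource is fetched with a `resources/read` request carrying a URI, and nothing obliges a client to send one. The sketch below pairs that request with three hypothetical client policies to show how the same server can end up contributing different amounts of context. The policy names, the `devstudio://` URIs, and the selection logic are assumptions for illustration and do not describe any particular vendor's agent.

```python
import json


def resources_read_request(request_id: int, uri: str) -> str:
    """Serialize an MCP resources/read request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "resources/read",
        "params": {"uri": uri},
    })


def plan_reads(available_uris: list[str], policy: str, user_prompt: str) -> list[str]:
    """Hypothetical client heuristics deciding which resources to fetch."""
    if policy == "eager":
        chosen = list(available_uris)  # load everything on offer
    elif policy == "on_mention":
        # fetch only resources the user names explicitly
        chosen = [u for u in available_uris if u in user_prompt]
    else:
        chosen = []  # never fetch resources unprompted
    return [resources_read_request(i, u) for i, u in enumerate(chosen, start=1)]


URIS = ["devstudio://benchmarks/table", "devstudio://docs/index"]  # hypothetical
PROMPT = "Compare FPS/W for MobileNetV2 across all compiled configs."

for policy in ("eager", "on_mention", "never"):
    print(policy, len(plan_reads(URIS, policy, PROMPT)))
```

Only the eager policy issues any reads for this prompt. Under the other two, the benchmark table never reaches the model unless the user names it.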

This is a real limitation, and one the MCP ecosystem is still working through. We're iterating on how we structure and surface resources to work well across a range of agent implementations, but it's not a solved problem. Results today depend on which agent you're using and how it handles resources.

Terminology disambiguation is its own challenge. Standard LLM training data has definitions for terms like "PE," "tile," and "trange," just not our definitions. Getting the glossary to win against the model's prior requires careful attention to how results are surfaced and how the agent is guided to treat them as authoritative.
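One possible way to surface a glossary hit so that it competes with the model's prior is to return the definition together with its source and an explicit instruction about precedence. This is a sketch of that idea, not a description of how the DevStudio glossary tool formats its output. The function name and wording are assumptions, and the definition is deliberately left as a placeholder.

```python
def format_glossary_result(term: str, definition: str, source: str) -> str:
    """Wrap a glossary definition with its source and a precedence note."""
    return "\n".join([
        f"Term: {term}",
        f"Source: {source} (authoritative for this toolchain)",
        f"Definition: {definition}",
        "Note: use this definition in preference to any general-purpose "
        "meaning of the term.",
    ])


# The definition text is a placeholder; the real text would come from the glossary.
print(format_glossary_result("PE", "<definition text from the glossary>", "Quadric glossary"))
```

Whether a given agent honors a note like this is itself implementation-dependent, so a format of this kind would need testing against each agent.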

Where We Are

We're running this internally and finding it genuinely useful, particularly for documentation search, glossary resolution, and structured data lookups where the tool model maps cleanly onto the task. Results are still uneven across agent implementations, so we're keeping it internal for now and expanding access as we iterate.

If you're interested in where this is heading, as a potential customer or as an engineer who wants to work on problems like this, we'd like to hear from you.
