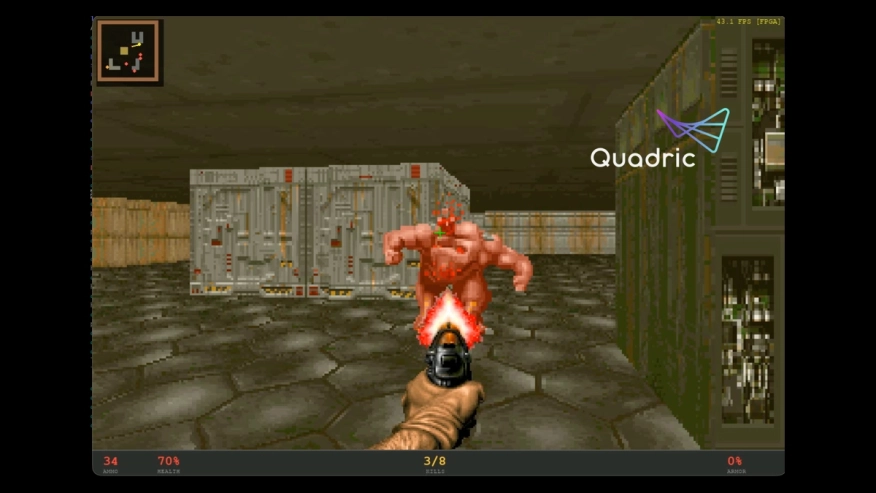

Can Your NPU Run DOOM? Chimera Can.

Is your NPU DOOMed? Quadric's Chimera GPNPU runs every AI model — and a complete DOOM engine. Find out why Quadric is different.

There's a reason "but can it run DOOM?" became the universal litmus test for any new piece of silicon. DOOM's renderer isn't a toy — it's a real-time system that demands raycasting, texture mapping, perspective-correct floor projection, dynamic lighting, depth-buffered sprite compositing, and a full palette-indexed shading pipeline. It requires branching, pointer arithmetic, trigonometry, irregular memory access patterns, and complex control flow — all executing under a hard frame-time deadline.

In other words, it requires a computer, not a matrix multiplier with a marketing department.

What John Carmack Actually Wrote

It's worth pausing on what the original DOOM engine actually looks like under the hood, because it tells you everything about why this workload is kryptonite for AI accelerators.

Carmack wrote DOOM in ANSI C, with performance-critical inner loops hand-tuned in x86 assembly. The target hardware was a 386 or 486 — machines that often didn't even have a floating-point unit. So the entire engine runs on 16.16 fixed-point integer arithmetic. No floats. No doubles. No FPU. id Software confirmed at the time that DOOM doesn't touch the math coprocessor at all. Adding a 387 to your 386 did exactly nothing for your framerate.
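In 16.16 format, a value is stored as a 32-bit integer scaled by 65,536, so a multiply needs a 64-bit intermediate and a 16-bit shift. The sketch below is in C++ to match the kernel code later in this post. It borrows the `fixed_t`, `FRACBITS` and `FRACUNIT` names from the released DOOM source, but `toFixed`, `fixedMul` and `fixedDiv` are illustrative helpers, and the division omits DOOM's overflow guard:

```cpp
#include <cassert>
#include <cstdint>

using fixed_t = std::int32_t;                 // 16 integer bits . 16 fraction bits
constexpr int FRACBITS = 16;
constexpr fixed_t FRACUNIT = 1 << FRACBITS;   // 1.0 in 16.16

// Integer to 16.16 (multiply rather than shift so negatives are well defined).
constexpr fixed_t toFixed(int whole) { return whole * FRACUNIT; }

// 16.16 multiply: the raw product carries 32 fraction bits, so widen to
// 64 bits and shift 16 of them back out.
constexpr fixed_t fixedMul(fixed_t a, fixed_t b) {
    return static_cast<fixed_t>((static_cast<std::int64_t>(a) * b) >> FRACBITS);
}

// 16.16 divide: scale the dividend up by 2^16 first so the quotient keeps
// 16 fraction bits. Unlike DOOM's FixedDiv, this does not guard against overflow.
inline fixed_t fixedDiv(fixed_t a, fixed_t b) {
    assert(b != 0);
    return static_cast<fixed_t>((static_cast<std::int64_t>(a) * FRACUNIT) / b);
}
```

For example, `fixedMul(toFixed(3), FRACUNIT / 2)` yields 98304, the 16.16 encoding of 1.5, with no floating-point instruction anywhere on the path.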

And there are no matrix multiplies anywhere in the renderer. Not one. The rendering pipeline is built entirely out of integer adds, shifts, comparisons, table lookups, and the occasional fixed-point multiply or divide. Walls are vertical columns of texture indexed by fixed-point coordinates. Floors are horizontal spans with per-row perspective lookups. Lighting is a stack of 256-entry palette remap tables (the COLORMAP), one per light level, with the table selected from sector brightness and distance. Even distance calculations avoid square roots: Carmack used an octagonal approximation built from nothing but absolute values, a comparison, a shift, and a couple of adds.
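The octagonal approximation fits in a few lines. The released DOOM source implements it as `P_AproxDistance`; the version below restates the same formula in C++ under a hypothetical name, `approxDistance`, operating on raw `fixed_t` values:

```cpp
#include <cassert>
#include <cstdint>
#include <cstdlib>

using fixed_t = std::int32_t;

// Octagonal distance approximation: |dx| + |dy| - min(|dx|, |dy|) / 2.
// No square root, no FPU: two abs, one compare, one shift, two add/subtracts.
fixed_t approxDistance(fixed_t dx, fixed_t dy) {
    dx = std::abs(dx);
    dy = std::abs(dy);
    if (dx < dy)
        return dx + dy - (dx >> 1);
    return dx + dy - (dy >> 1);
}
```

For a 3-4-5 triangle it returns 6 instead of 5, and along either axis it is exact: a bounded overestimate in exchange for never computing a square root.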

The computational vocabulary of DOOM is: adds, subtracts, shifts, bitwise ops, comparisons, table lookups, and scalar integer multiplies. No GEMMs. No convolutions. No matrix anything. Just a relentless stream of data-dependent scalar decisions running through a carefully orchestrated memory access pattern.
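To make that vocabulary concrete, here is a hypothetical C++ sketch of a DOOM-style wall-column inner loop, loosely modeled on the role of `R_DrawColumn` in the released source. The function name, parameters, and the 128-texel wrap are illustrative assumptions, not id Software's code:

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

using fixed_t = std::int32_t;
constexpr int FRACBITS = 16;

// Hypothetical wall-column drawer: walks a 16.16 texture coordinate down one
// screen column, wraps it to a 128-texel texture column, remaps the texel
// through a 256-entry colormap, and stores it in an 8-bit framebuffer.
void drawColumn(std::vector<std::uint8_t>& frame, int frameWidth, int x,
                int yTop, int yBottom,
                const std::uint8_t* texColumn,                  // 128 palette indices
                const std::array<std::uint8_t, 256>& colormap,  // one light-level remap
                fixed_t texFrac, fixed_t texStep) {
    assert(x >= 0 && x < frameWidth);
    for (int y = yTop; y <= yBottom; ++y) {
        int texel = (texFrac >> FRACBITS) & 127;                // shift and mask
        frame[static_cast<std::size_t>(y) * frameWidth + x] =
            colormap[texColumn[texel]];                         // two table lookups
        texFrac += texStep;                                     // one add per pixel
    }
}
```

Per pixel, that is one shift, one mask, two table lookups, a store, and an add. There is nothing in it for a GEMM engine to accelerate.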

This is exactly the kind of workload that exposes whether a processor is a real computer or a purpose-built matrix multiplier wearing a general-purpose disguise.

What We Built on the GPNPU

We took the same computational DNA — fixed-point arithmetic, raycasting, column-based texture sampling, LUT-driven lighting, depth-buffered sprite compositing — and compiled a complete DOOM-style renderer as a single kernel targeting the Quadric Chimera GPNPU. Every pixel of a 224×168 frame is computed on-chip, in one shot. No host-side rendering. No frame decomposition across a CPU.

We didn't port DOOM. We wrote a renderer that speaks DOOM's language: integer ALU operations, computed memory accesses, and data-dependent control flow. Then we proved that Chimera is fluent in it. The kernel completes a full 224×168 frame in 560K cycles on QC-N; at a 1GHz clock, that's ~1,786 frames per second. At 3nm, the entire chip runs at under 1W.
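The frame-rate figure is straightforward arithmetic on the cycle count, assuming the 1GHz clock quoted above:

$$
t_{\text{frame}} = \frac{560{,}000\ \text{cycles}}{10^{9}\ \text{cycles/s}} = 560\ \mu\text{s},
\qquad
\frac{1}{t_{\text{frame}}} \approx 1{,}785.7\ \text{frames/s}
$$

Spread over the 224 × 168 = 37,632 pixels in a frame, that is 560,000 / 37,632 ≈ 14.9 cycles of wall-clock time per pixel for the whole array.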

Why Other AI Accelerators Can't Even Attempt This

Most AI accelerators are systolic arrays bolted to a DMA engine. They stream large matrix multiplies through a fixed datapath. That's fine for the GEMM-dominated layers in CNN and transformer inference. But a raycasting renderer doesn't have a single matrix multiply in it.

What it does have: data-dependent branching where every ray terminates at a different step. Irregular, computed memory accesses where texture coordinates come from a runtime fixed-point multiply against the ray intersection point. Per-element scalar logic for depth comparison, transparency tests, and conditional overwrites. Multi-phase producer-consumer dependencies between the column prepass and the tile renderer.
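To make "every ray terminates at a different step" concrete, here is a conventional scalar DDA loop in C++. It is a hypothetical sketch, not Quadric's code: side distances are plain integers, the map is a flat 8×8 byte array, and `castRay` and `RayHit` are illustrative names. The `while` loop exits at the first wall, so its trip count depends on the data:

```cpp
#include <cassert>
#include <cstdint>

constexpr int MAP_W = 8;   // hypothetical map size, flat row-major bytes
constexpr int MAP_H = 8;

struct RayHit {
    int steps;  // cells crossed before the loop stopped
    int side;   // 0 = last crossed an x boundary, 1 = a y boundary
    int cell;   // wall value hit, or -1 if the ray left the map
};

// Conventional scalar DDA. stepDir* must be +1 or -1 and deltaDist* must be
// positive, which guarantees the ray eventually hits a wall or leaves the map.
RayHit castRay(const std::uint8_t* map, int mapX, int mapY,
               int stepDirX, int stepDirY,
               std::int32_t sideDistX, std::int32_t sideDistY,
               std::int32_t deltaDistX, std::int32_t deltaDistY) {
    assert((stepDirX == 1 || stepDirX == -1) && (stepDirY == 1 || stepDirY == -1));
    assert(deltaDistX > 0 && deltaDistY > 0);
    RayHit r{0, 0, 0};
    while (true) {                        // trip count is data-dependent
        if (sideDistX < sideDistY) {      // branch: step in x or in y
            sideDistX += deltaDistX;
            mapX += stepDirX;
            r.side = 0;
        } else {
            sideDistY += deltaDistY;
            mapY += stepDirY;
            r.side = 1;
        }
        ++r.steps;
        if (mapX < 0 || mapX >= MAP_W || mapY < 0 || mapY >= MAP_H) {
            r.cell = -1;                  // left the map without a hit
            return r;
        }
        if (map[mapY * MAP_W + mapX] != 0) {   // early exit on the first wall
            r.cell = map[mapY * MAP_W + mapX];
            return r;
        }
    }
}
```

With walls around the border of the map, a ray cast east from cell (3, 3) stops after 4 steps while one cast north stops after 3. Run one of these per lane and the lanes disagree about when to leave the loop.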

On a typical NPU, these operations simply don't exist. There's no instruction for "branch based on this ray hitting a wall." There's no mechanism to compute a texture address at runtime and load a single byte from an arbitrary location in on-chip SRAM. The hardware wasn't designed to do this, the toolchain can't express it, and no amount of clever operator fusion will change that. The workload doesn't "fall back to the CPU" — it was never on the accelerator to begin with.

They Don't Have Real On-Chip Memory Autonomy

Our kernel loads the entire rendering context — map, three texture atlases, colormap, column cache, sprite metadata, and the output framebuffer — into under a megabyte of L2 memory. The GPNPU directly computes addresses and issues single-element loads through the RAU (Random Access Unit) — no DMA descriptors, no host intervention, no round-trips to DRAM mid-render.

Most NPU architectures treat on-chip SRAM as a scratchpad managed by a host-programmed DMA controller, tile by tile, layer by layer. Ask one of these chips to do a computed lookup into a 512×1024 texture atlas based on a runtime-calculated address and you'll get a blank stare from the hardware.
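In plain C++, the operation being described is a one-line address computation followed by a single-byte load. The sketch below assumes a row-major atlas 512 texels wide and 1024 tall, with one palette byte per texel and power-of-two textures packed inside it; `atlasTexelOffset` and `loadTexel` are hypothetical names, not the CCL `rau` API. The address depends on `u` and `v`, which are only known at run time:

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical atlas layout: 512 texels wide by 1024 tall, one byte
// (a palette index) per texel, stored row-major in one flat buffer.
constexpr int ATLAS_W = 512;
constexpr int ATLAS_H = 1024;

// Byte offset of texel (u, v) in a power-of-two texture whose top-left
// corner sits at (baseX, baseY) in the atlas. Masks wrap u and v.
std::size_t atlasTexelOffset(int baseX, int baseY, int u, int v, int texW, int texH) {
    assert(texW > 0 && (texW & (texW - 1)) == 0);   // power of two
    assert(texH > 0 && (texH & (texH - 1)) == 0);
    int x = baseX + (u & (texW - 1));
    int y = baseY + (v & (texH - 1));
    assert(x >= 0 && x < ATLAS_W && y >= 0 && y < ATLAS_H);
    return static_cast<std::size_t>(y) * ATLAS_W + static_cast<std::size_t>(x);
}

// One runtime-computed, single-byte load from the atlas.
std::uint8_t loadTexel(const std::vector<std::uint8_t>& atlas,
                       int baseX, int baseY, int u, int v, int texW, int texH) {
    return atlas[atlasTexelOffset(baseX, baseY, u, v, texW, texH)];
}
```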

They Can't Express a Mega-Kernel

The entire renderer — raycasting, wall texturing, floor/ceiling projection, lighting, sprite compositing, framebuffer writeback — compiles and executes as a single GPNPU kernel invocation. This is only possible because Chimera's programming model gives you actual C++ — CCL (Chimera Compute Language) — compiled through our own LLVM backend targeting the GPNPU. You write C++ with SIMD semantics, fixed-point arithmetic, on-chip memory allocation, and random-access loads. The compiler generates native GPNPU machine code. Not a graph compiler mapping ONNX nodes to a fixed catalog of hardware kernels — a real compiler, for a real ISA.

There's no operator library to be limited by. There's no "unsupported op" error. If you can write it in C++, the GPNPU can run it. Try expressing a DDA raycaster as a sequence of Conv2D and MatMul ops. We'll wait.

Inside the Kernel

Here's what some of this actually looks like in CCL.

Branchless DDA Raycasting. The raycaster doesn't branch on wall hits. Instead, it computes all 32 candidate map cell addresses in a pure ALU phase, fetches them in a single batched RAU load, then finds the first hit via conditional-move accumulation:

```cpp
// Phase 1: pure ALU — collect all 32 candidate addresses
for (std::int32_t step = 0; step < MAX_STEPS; ++step) {
    auto stepInX = (sideDistX < sideDistY);
    mapX = stepInX ? (mapX + stepDirX) : mapX;
    mapY = stepInX ? mapY : (mapY + stepDirY);
    crossDists[step] = stepInX ? sideDistX : sideDistY;
    hitSides[step] = stepInX ? (std::int32_t)0 : (std::int32_t)1;
    sideDistX = stepInX ? (sideDistX + deltaDistX) : sideDistX;
    sideDistY = stepInX ? sideDistY : (sideDistY + deltaDistY);
    // ... clamp and compute L2 address for map[mapY][mapX]
    addrs[step] = rau::computeAddr<OcmMap>(z0, z0, gy, gx);
}

// Phase 2: one batched RAU load for all 32 cells
rau::config(ocmMap);
rau::load::tiles(addrs, cells, ocmMap, MAX_STEPS);

// Phase 3: branchless first-hit scan
for (std::int32_t step = 0; step < MAX_STEPS; ++step) {
    auto wallHit = (cells[step] != 0) | (!inBounds);
    auto firstHit = wallHit & (!hit);
    perpDist = firstHit ? crossDists[step] : perpDist;
    hitMeta = firstHit ? cells[step] : hitMeta;
    hit = hit | firstHit;
}
```

Every lane in the SIMD array is casting its own ray through a different screen column, and every ray hits a different wall at a different step — but the control flow is uniform. No divergence, no serialization. This is the kind of pattern that a GEMM engine has no vocabulary for.
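The same three phases can be mimicked in portable C++ for a single lane. This is a hypothetical scalar emulation, not CCL: the batched `rau::load::tiles` call becomes a plain gather loop, the map is a flat 8×8 byte array, and `inBounds` is tracked per step. Whether a given compiler emits branch-free code for this scalar version is up to the compiler; the point is that every phase runs a fixed trip count no matter where the wall is:

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstdint>

constexpr int MAP_W = 8;        // hypothetical 8x8 map, flat row-major bytes
constexpr int MAP_H = 8;
constexpr int MAX_STEPS = 32;   // the kernel above also scans 32 candidates

struct BranchlessHit {
    std::int32_t perpDist;  // boundary distance at the first hit
    std::int32_t cell;      // cell value at the first hit
    bool hit;
};

// Scalar emulation of one SIMD lane's ray.
BranchlessHit castRayBranchless(const std::uint8_t* map, int mapX, int mapY,
                                int stepDirX, int stepDirY,
                                std::int32_t sideDistX, std::int32_t sideDistY,
                                std::int32_t deltaDistX, std::int32_t deltaDistY) {
    std::array<int, MAX_STEPS> addrs{};
    std::array<std::int32_t, MAX_STEPS> crossDists{};
    std::array<bool, MAX_STEPS> inBounds{};

    // Phase 1: pure ALU; selects instead of branches.
    for (int step = 0; step < MAX_STEPS; ++step) {
        bool stepInX = sideDistX < sideDistY;
        mapX = stepInX ? mapX + stepDirX : mapX;
        mapY = stepInX ? mapY : mapY + stepDirY;
        crossDists[step] = stepInX ? sideDistX : sideDistY;
        sideDistX = stepInX ? sideDistX + deltaDistX : sideDistX;
        sideDistY = stepInX ? sideDistY : sideDistY + deltaDistY;
        inBounds[step] = mapX >= 0 && mapX < MAP_W && mapY >= 0 && mapY < MAP_H;
        // Clamp so every address is legal even after the ray leaves the map.
        int gx = std::min(std::max(mapX, 0), MAP_W - 1);
        int gy = std::min(std::max(mapY, 0), MAP_H - 1);
        addrs[step] = gy * MAP_W + gx;
    }

    // Phase 2: gather every candidate cell (stands in for the batched RAU load).
    std::array<std::uint8_t, MAX_STEPS> cells{};
    for (int step = 0; step < MAX_STEPS; ++step)
        cells[step] = map[addrs[step]];

    // Phase 3: first-hit scan; no early exit, only conditional selects.
    BranchlessHit r{0, 0, false};
    for (int step = 0; step < MAX_STEPS; ++step) {
        bool wallHit = (cells[step] != 0) | !inBounds[step];
        bool firstHit = wallHit & !r.hit;
        r.perpDist = firstHit ? crossDists[step] : r.perpDist;
        r.cell = firstHit ? cells[step] : r.cell;
        r.hit = r.hit | firstHit;
    }
    return r;
}
```

A ray that hits its wall at step 3 and one that hits at step 30 both execute exactly `MAX_STEPS` iterations of each loop, which is what lets every lane share one instruction stream.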

SIMD-Predicated Sprite Compositing. When compositing sprites, the kernel checks whether any SIMD lane in the current tile actually intersects the sprite before paying for a texture fetch:

```cpp
auto inSprite = sprActive &
                (myX >= left) & (myX < right) &
                (myY >= top) & (myY < bottom);

if (!chimera::anyOf(inSprite)) continue;

// Only now do we fetch the texel and shade it
addrArr[0] = spriteTexelAddrFromBase(ocmSpriteTex, atlasBaseX, atlasBaseY, texU, texV);
rau::config(ocmSpriteTex);
rau::load::tiles(addrArr, texelArr, ocmSpriteTex, 1);
```

anyOf() reduces across the entire core array — it asks "does any core in this tile care about this sprite?" and skips the texture fetch, colormap lookup, and depth test entirely if the answer is no. Per-sprite, per-tile, zero wasted memory traffic.
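The early-out can be approximated in portable C++, with `std::any_of` standing in for `chimera::anyOf`. In this hypothetical sketch a tile is 8×8 lanes with one pixel per lane, and a sprite is an axis-aligned screen rectangle; the tile size and the names `tileTouchesSprite` and `tilesPayingForSprite` are assumptions, not Chimera's actual core-array geometry or API:

```cpp
#include <algorithm>
#include <array>
#include <cassert>

// Hypothetical tile shape: 8x8 lanes, one pixel per lane.
constexpr int TILE_W = 8;
constexpr int TILE_H = 8;

struct Sprite { int left, right, top, bottom; bool active; };  // screen-space box

// True if any lane of the tile at (tileX0, tileY0) overlaps the sprite.
bool tileTouchesSprite(int tileX0, int tileY0, const Sprite& s) {
    std::array<bool, TILE_W * TILE_H> inSprite{};
    for (int ly = 0; ly < TILE_H; ++ly) {
        for (int lx = 0; lx < TILE_W; ++lx) {
            int myX = tileX0 + lx;   // this lane's pixel
            int myY = tileY0 + ly;
            inSprite[ly * TILE_W + lx] = s.active && myX >= s.left && myX < s.right &&
                                         myY >= s.top && myY < s.bottom;
        }
    }
    // Tile-wide reduction: the portable stand-in for chimera::anyOf.
    return std::any_of(inSprite.begin(), inSprite.end(), [](bool b) { return b; });
}

// How many tiles of a frame would issue this sprite's texel fetch; every other
// tile takes the early `continue` and skips fetch, colormap and depth test.
int tilesPayingForSprite(int screenW, int screenH, const Sprite& s) {
    int paying = 0;
    for (int ty = 0; ty < screenH; ty += TILE_H)
        for (int tx = 0; tx < screenW; tx += TILE_W)
            paying += tileTouchesSprite(tx, ty, s) ? 1 : 0;
    return paying;
}
```

For a 10×7-pixel sprite at (10, 5) in a 224×168 frame, only 4 of the 588 tiles pay for the fetch under these assumptions.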

Tile Rendering with Predication. The screen is decomposed into tiles matching the Chimera core array. Tiles entirely above or below the horizon skip the irrelevant plane — only tiles straddling the horizon pay for both floor and ceiling. Wall pixels are textured from a pre-computed column cache. Four directional lights are dotted against each wall's surface normal, summed with a distance fog term, and applied per-pixel — all in fixed-point, inline in the kernel.
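The paragraph doesn't spell out the exact light model, so the following is an assumption: a clamped Lambertian term, `max(0, N·L)`, per light, scaled by that light's intensity, minus a linear distance fog, clamped to [0, 1] and quantized to 32 levels so it could select a COLORMAP-style table. `wallLightLevel`, `Light` and `Vec2` are hypothetical names. Everything stays in 16.16 integer math:

```cpp
#include <array>
#include <cassert>
#include <cstdint>

using fixed_t = std::int32_t;
constexpr int FRACBITS = 16;
constexpr fixed_t FRACUNIT = 1 << FRACBITS;

struct Vec2 { fixed_t x, y; };                   // 16.16 components
struct Light { Vec2 dir; fixed_t intensity; };   // dir: unit vector toward the light

constexpr fixed_t fixedMul(fixed_t a, fixed_t b) {
    return static_cast<fixed_t>((static_cast<std::int64_t>(a) * b) >> FRACBITS);
}

// Hypothetical wall shading: sum max(0, N.L) * intensity over four lights,
// subtract a linear fog term, clamp to [0, 1], and map to a level in 0..31.
int wallLightLevel(Vec2 normal, const std::array<Light, 4>& lights,
                   fixed_t dist, fixed_t fogPerUnit) {
    fixed_t sum = 0;
    for (const Light& l : lights) {
        fixed_t ndotl = fixedMul(normal.x, l.dir.x) + fixedMul(normal.y, l.dir.y);
        ndotl = ndotl > 0 ? ndotl : 0;          // lights behind the wall add nothing
        sum += fixedMul(ndotl, l.intensity);
    }
    fixed_t fog = fixedMul(dist, fogPerUnit);   // farther walls get darker
    fixed_t lit = sum - fog;
    lit = lit < 0 ? 0 : (lit > FRACUNIT ? FRACUNIT : lit);
    return static_cast<int>((static_cast<std::int64_t>(lit) * 31) >> FRACBITS);
}
```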

So What?

Carmack built DOOM to run on the cheapest integer hardware available in 1993. Thirty-plus years later, the AI accelerator industry has spent billions of dollars on silicon that can't handle the same class of workload. Systolic arrays can't do it. Graph compilers can't express it. DMA-managed scratchpads can't address it.

Chimera can. The same chip that runs transformer attention and quantized convolutions at full throughput also runs a raycasting engine, because it was designed from the start to be a programmable computer — not a fixed-function GEMM accelerator with an SDK that says "general purpose" on the box.

Your NPU runs neural networks. Ours runs programs.

And yes — it runs DOOM. IDKFA.

The Quadric GPNPU (General-Purpose Neural Processing Unit) is built on the Chimera architecture with native C++ programmability via CCL and a custom LLVM backend. To learn more about deploying real workloads — neural or otherwise — on Chimera silicon, contact us.
