Performance Efficiency AI Accelerator

Overview

The NeuroMosaic Processor (NMP) family lowers the barriers to deploying ML by pairing a general-purpose architecture with a simple programmer’s model that supports virtually any class of neural network architecture and use case.

Our differentiation starts with the ability to execute multiple AI/ML models simultaneously, which significantly expands capability over existing approaches. This advantage comes from the co-developed NeuroMosAIc Studio software, which dynamically allocates hardware resources to match the target workload, resulting in highly optimized, low-power execution. The designer may also select the optional on-device training acceleration extension, which enables iterative learning after deployment. This capability reduces dependence on the cloud while improving accuracy, efficiency, customization, and personalization without costly model retraining and redeployment, thereby extending device lifecycles.

Key features

- Up to 6 TOPS

- Up to 6 MB Local Memory

- 32-bit CPU: RISC-V, Arm Cortex-M, or Arm Cortex-A

- 3 x AXI4 interfaces, 128-bit (Host, CPU, and Data)
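The headline figures above can be turned into a few back-of-envelope numbers. The sketch below is illustrative only: the 2-operations-per-MAC convention and the 1 GHz bus clock are assumptions that do not come from this listing, and the power estimate simply divides the peak throughput by the 40 TOPS/W efficiency quoted for the NMP-550, which may not have been measured at the same operating point.

```python
# Back-of-envelope figures derived from the listed key features.
# Values marked ASSUMPTION are hypothetical and not stated in the listing.

PEAK_TOPS = 6.0        # from the listing: "Up to 6 TOPS"
TOPS_PER_WATT = 40.0   # from the listing: NMP-550 efficiency claim
AXI_WIDTH_BITS = 128   # from the listing: 128-bit AXI4
AXI_PORTS = 3          # from the listing: Host, CPU, and Data

OPS_PER_MAC = 2        # ASSUMPTION: one multiply-accumulate counted as 2 ops
BUS_CLOCK_HZ = 1.0e9   # ASSUMPTION: hypothetical 1 GHz bus clock


def peak_macs_per_second(tops: float, ops_per_mac: int = OPS_PER_MAC) -> float:
    """Convert a TOPS rating into multiply-accumulates per second."""
    return tops * 1e12 / ops_per_mac


def implied_power_watts(tops: float, tops_per_watt: float) -> float:
    """Power implied if peak throughput and peak efficiency coincide."""
    return tops / tops_per_watt


def axi_peak_bytes_per_second(width_bits: int, clock_hz: float, ports: int = 1) -> float:
    """Theoretical peak per direction, assuming one data beat per clock cycle."""
    return (width_bits // 8) * clock_hz * ports


if __name__ == "__main__":
    print(f"Peak MAC rate:      {peak_macs_per_second(PEAK_TOPS):.2e} MAC/s")
    print(f"Implied power:      {implied_power_watts(PEAK_TOPS, TOPS_PER_WATT) * 1e3:.0f} mW")
    print(f"AXI4 per port:      {axi_peak_bytes_per_second(AXI_WIDTH_BITS, BUS_CLOCK_HZ) / 1e9:.0f} GB/s")
    print(f"AXI4, all 3 ports:  {axi_peak_bytes_per_second(AXI_WIDTH_BITS, BUS_CLOCK_HZ, AXI_PORTS) / 1e9:.0f} GB/s")
```

These are theoretical ceilings. Sustained throughput and bandwidth depend on the actual clock frequency, the selected configuration, and the workload, none of which are specified here.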

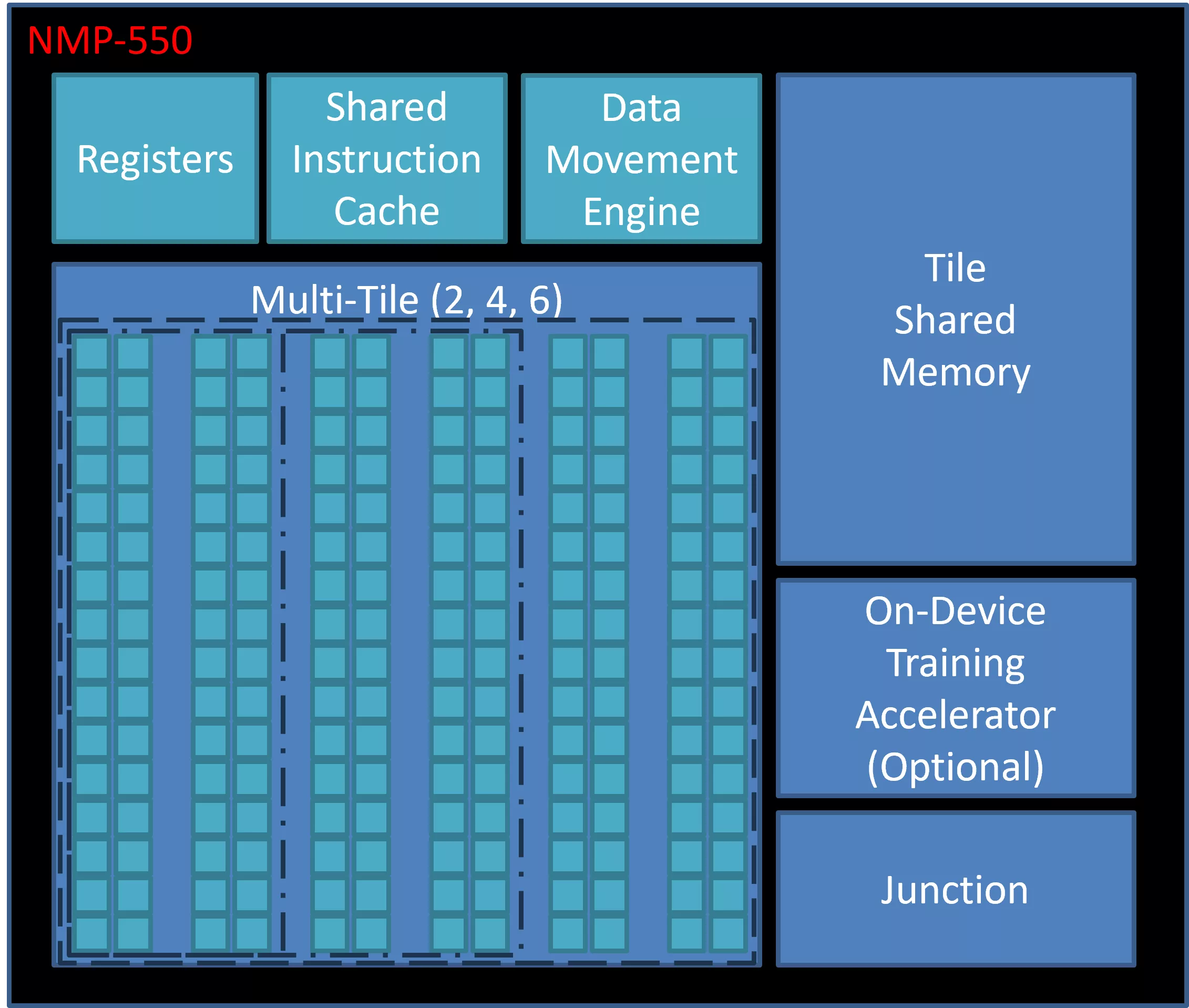

Block Diagram

Benefits

- The NMP-550 is a significant step forward for mid-range to high-end mobile and edge computing systems that demand high performance efficiency. AI acceleration extends to 6 TOPS while delivering an industry-leading 40 TOPS/W. Numerous architectural advances result in higher convolution throughput and 2x compute density while lowering total area by 25%. Added support for the Mish and Swish activation functions extends efficiency, while an upgraded RISC-V controller delivers 4x the initialization and post-processing performance of the NMP-500. Alternatively, designers may elect to use the Arm® Cortex®-M or Cortex-A for further flexibility and software extension.

- The patented, co-developed hardware and software architecture lets end users map multiple models onto the accelerator's resources to meet simultaneous, sequential, or event-based execution requirements.

- Three configuration options allow the designer to scale back compute and memory resources to further optimize for area and power in highly constrained devices.
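For readers unfamiliar with the two activation functions named above, the following sketch gives their standard mathematical definitions in plain Python. It is a reference for the math only: it says nothing about how the NMP hardware evaluates them, and the `beta` parameter on `swish` is the usual generalization rather than something taken from this listing.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def swish(x: float, beta: float = 1.0) -> float:
    """Swish: x * sigmoid(beta * x). With beta = 1 this is also called SiLU."""
    return x * sigmoid(beta * x)


def mish(x: float) -> float:
    """Mish: x * tanh(softplus(x))."""
    return x * math.tanh(softplus(x))


if __name__ == "__main__":
    for value in (-2.0, 0.0, 1.0, 4.0):
        print(f"x={value:5.1f}  swish={swish(value):.4f}  mish={mish(value):.4f}")
```

Both functions are smooth, pass through zero at the origin, and approach the identity for large positive inputs, which is why they are often used as drop-in alternatives to ReLU.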

Applications

- Driver Monitoring and Fleet Management

- Image / Video Analytics and Super Resolution

- Intruder, Safety, and Compliance




Frequently asked questions about Edge AI Accelerator IP cores

What is Performance Efficiency AI Accelerator?

Performance Efficiency AI Accelerator is an Edge AI Accelerator IP core from AiM Future, Inc., listed on Semi IP Hub.

How should engineers evaluate this Edge AI Accelerator?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this Edge AI Accelerator IP.

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.