RISC-V-Based, Open Source AI Accelerator for the Edge

Coral NPU is a machine learning (ML) accelerator core designed for energy-efficient AI at the edge.

Overview

Based on the open RISC-V ISA, Coral NPU is available as validated open-source IP for commercial silicon integration.

Coral NPU's open-source strategy aims to create a standard architecture that accelerates the edge AI ecosystem. It builds on Google Research's earlier effort, Coral.ai. First released in 2023 as a component of the "Open Se Cura" research project, it is now a dedicated initiative to drive this vision forward.

The Problem Coral NPU Solves

Coral NPU directly addresses the significant fragmentation in the edge AI device ecosystem. Developers currently face a steep learning curve and major programming complexity because programming models differ between separate general-purpose (CPU) and ML compute blocks. These ML blocks often rely on command buffers generated by specialized, proprietary compilers. This fragmented approach makes it difficult to combine the strengths of each compute unit and forces developers to manage multiple proprietary and opaque toolchains for bespoke architectures.

Coral NPU is built on the RISC-V ISA standard, extending the C programming environment with native tensor processing capabilities. It supports multiple machine learning frameworks, including JAX, PyTorch, and TensorFlow Lite (TFLite), using open-standards-based tools such as Multi-Level Intermediate Representation (MLIR) from the LLVM project for compiler infrastructure.

Integrating native ML acceleration primitives with a general-purpose computing ISA delivers high ML performance without the system complexity, cost, and data movement usually associated with separate, proprietary CPU/NPU designs.

Key features

Coral NPU's design is driven by several key principles:

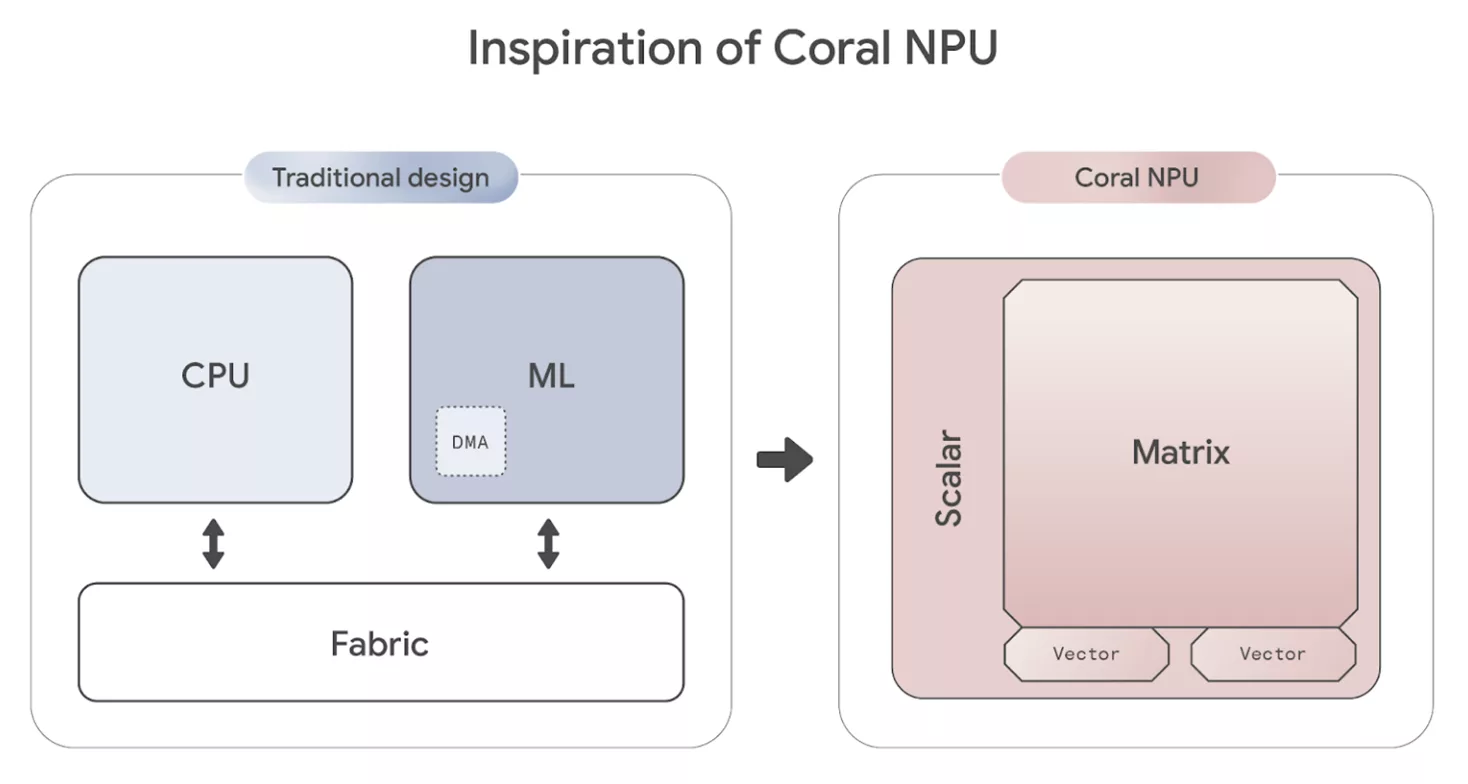

- ML-First Architecture: Coral NPU reverses the traditional processor design order. Instead of starting with basic scalar computing, then adding vector (SIMD) and finally matrix capabilities, Coral NPU is built with matrix (ML) capabilities first, then integrates vector and scalar functions. Tightly integrating scalar, vector, and matrix functions in a single ISA optimizes the entire architecture for AI workloads from its foundation. (More details in the Architecture overview.)

- Dedicated ML Engine: At the center of the design is a quantized outer-product multiply-accumulate (MAC) engine, purpose-built for the fundamental calculations of neural networks. This specialized core takes 8-bit operands and produces 32-bit results.

- Integrated Vector (SIMD) Core: The vector co-processor implements the RISC-V Vector Instruction Set (RVV) v1.0, using a 32 × 256-bit vector register file and a "strip-mining" mechanism where a single instruction triggers multiple operations, significantly boosting efficiency.

- Simple, C-Programmable Scalar Core: A lightweight RISC-V (RV32IM) frontend acts as a simple controller, managing and feeding the powerful Matrix and Vector backend. This core is designed for a "run-to-completion" model, meaning it doesn't require a complex operating system or frequent interrupts, contributing to its ultra-low power consumption.

- Efficient Memory Management: Coral NPU uses a single layer of small, fast cache (8 KB for instructions, 16 KB for data) to keep data close to the processing units, minimizing power and latency.

- Unified Developer Experience: The platform is C-programmable and designed for easy integration with modern ML compilers and runtimes such as TensorFlow Lite Micro (TFLM) and IREE. This allows a unified, MLIR-based toolchain to support models from major frameworks like TensorFlow, JAX, and PyTorch.
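The outer-product MAC described in the list above can be illustrated with a small software model. This is a minimal sketch, not the Coral NPU implementation: the function names `outer_product_mac` and `matmul_by_outer_products` are hypothetical, and plain Python integers stand in for the 32-bit accumulators. It shows how a matrix product can be built by accumulating one outer product of 8-bit operands per step.

```python
INT8_MIN, INT8_MAX = -128, 127


def outer_product_mac(acc, col, row):
    """Accumulate the outer product of two int8 vectors into acc, in place.

    acc[i][j] += col[i] * row[j]. The accumulators are wider than the inputs;
    here they are unbounded Python ints standing in for 32-bit registers.
    """
    for value in (*col, *row):
        if not INT8_MIN <= value <= INT8_MAX:
            raise ValueError(f"{value} is outside the int8 range")
    for i, c in enumerate(col):
        for j, r in enumerate(row):
            acc[i][j] += c * r
    return acc


def matmul_by_outer_products(a, b):
    """Compute a @ b (a is MxK, b is KxN) as a sum of K outer products."""
    m, k, n = len(a), len(b), len(b[0])
    acc = [[0] * n for _ in range(m)]
    for step in range(k):
        col = [a[i][step] for i in range(m)]  # column `step` of a
        outer_product_mac(acc, col, b[step])  # row `step` of b
    return acc


print(matmul_by_outer_products([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
# [[19, 22], [43, 50]]
```

The wide accumulator matters because the largest int8 product is 128 × 128 = 16384, so a signed 32-bit accumulator can absorb many such products before overflow becomes possible. Whether the hardware wraps or saturates on overflow is not stated in the source.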

Block Diagram

Benefits

Coral NPU's design delivers a highly efficient balance of power and performance, making it well suited to ambient applications and scalable to multicore setups.

Representative numbers:

- Performance: targeting 512 GOPS (giga-operations per second) with 256 MACs per cycle

- Power Objective: targeting ~6 mW at 800 MHz on a 22 nm process
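The throughput target can be related to the MAC count with simple arithmetic. The sketch below assumes the common convention that one MAC counts as two operations (a multiply and an add). The source does not state the clock frequency behind the 512 GOPS figure, so the helper names and the two-operations convention are illustrative assumptions, not published methodology.

```python
def peak_gops(macs_per_cycle, clock_hz, ops_per_mac=2):
    """Peak throughput in giga-operations per second.

    ops_per_mac=2 is an assumed convention (multiply + add),
    not a figure taken from the source.
    """
    return macs_per_cycle * ops_per_mac * clock_hz / 1e9


def clock_for_target(target_gops, macs_per_cycle, ops_per_mac=2):
    """Clock frequency in Hz needed to reach target_gops at peak utilization."""
    return target_gops * 1e9 / (macs_per_cycle * ops_per_mac)


print(peak_gops(256, 1_000_000_000))  # 512.0
print(peak_gops(256, 800_000_000))    # 409.6
print(clock_for_target(512, 256))     # 1000000000.0
```

Under that assumption, 256 MACs per cycle reach 512 GOPS at a 1 GHz clock, while the 800 MHz operating point quoted for the power objective would correspond to about 410 GOPS.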

More specific power, performance, and area (PPA) metrics are made available by commercial silicon partners.

Applications

Coral NPU is designed to enable ultra-low-power, always-on edge AI applications, with a particular focus on ambient sensing systems. Its primary goal is to enable all-day AI experiences on wearable devices while minimizing battery usage.

Potential Use Cases

- Contextual Awareness: Detecting user activity (e.g., walking, running), proximity, or environment (e.g., indoors/outdoors, on-the-go) to enable "do-not-disturb" modes or other context-aware features.

- Audio Processing: Voice and speech detection, keyword spotting, live translation, transcription, and audio-based accessibility features.

- Image Processing: Person and object detection, facial recognition, gesture recognition, and low-power visual search.

- User Interaction: Enabling control via hand gestures, audio cues, or other sensor-driven inputs.

Ideal Device Categories

Coral NPU's combination of high efficiency and low power makes it ideal for a wide range of hardware:

- Hearables and smart earbuds

- Smart glasses and AR headsets

- Smartwatches and fitness trackers

- Smart home and ambient IoT devices

- Mobile phones (for ultra-low-power co-processing)

- Automotive and in-vehicle systems



Frequently asked questions about Edge AI Accelerator IP cores

What is RISC-V-Based, Open Source AI Accelerator for the Edge?

RISC-V-Based, Open Source AI Accelerator for the Edge is an Edge AI Accelerator IP core from VeriSilicon Microelectronics (Shanghai) Co., Ltd., listed on Semi IP Hub.

How should engineers evaluate this Edge AI Accelerator?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this Edge AI Accelerator IP.

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.