Neuromorphic Processor IP

Overview

License our analog in-memory compute macros (e.g., 32×32 X1 crossbars) for integration into your ASIC or SoC.

Key features

- In-Memory Compute: Efficient analog MACs for AI workloads

- Compact Footprint: 0.28 mm² including peripheral circuitry

- Wishbone Interface: Easy integration with standard digital buses

- Ready for Tapeout: Fully synthesized and foundry-compatible

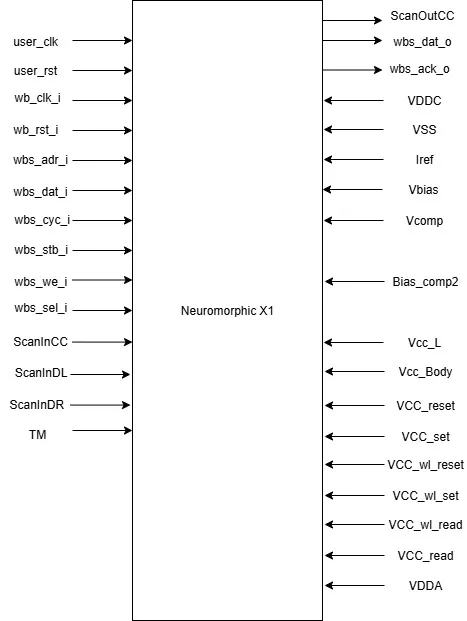

Block Diagram

Benefits

Neuromorphic X1 is a compact and efficient analog in-memory compute macro designed for next-generation edge AI applications. Built on a 32×32 1T1R crossbar array, it leverages analog weights to perform multiply-accumulate operations directly in memory, minimizing data movement and maximizing energy efficiency.
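The in-memory multiply-accumulate described above can be illustrated with an idealized model. The sketch below is not the X1 circuit: it assumes ideal cells where each input is a row voltage, each weight is a cell conductance, and each column current is the sum of voltage times conductance down that column. The function name `crossbar_mac` and all numeric values are hypothetical; only the 32×32 array size comes from the text.

```python
# Idealized analog crossbar MAC (illustrative only; not the X1 implementation).
ROWS, COLS = 32, 32  # array size taken from the product description


def crossbar_mac(voltages, conductances):
    """Return per-column currents I[j] = sum over i of V[i] * G[i][j]."""
    if len(voltages) != ROWS:
        raise ValueError(f"expected {ROWS} row voltages")
    if len(conductances) != ROWS or any(len(r) != COLS for r in conductances):
        raise ValueError(f"expected a {ROWS}x{COLS} conductance matrix")

    currents = [0.0] * COLS
    for i, v in enumerate(voltages):
        row = conductances[i]
        for j in range(COLS):
            # Each cell contributes V * G to its column; the column wire sums them.
            currents[j] += v * row[j]
    return currents


# Hypothetical example values: conductances in siemens, voltages in volts.
weights = [[1e-6 * ((i + j) % 4 + 1) for j in range(COLS)] for i in range(ROWS)]
inputs = [0.1] * ROWS
column_currents = crossbar_mac(inputs, weights)
```

In this model all 32 column sums come from a single read of the array, which is the data-movement saving the description refers to. A real macro would also have to account for device variation, wire resistance, and sense-amplifier resolution, none of which are modeled here.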

With integrated decoders and sense amplifiers, the X1 macro delivers 1 kb of analog weight storage (32 × 32 = 1,024 cells) in a compact 0.28 mm² area. Its Wishbone bus compatibility ensures seamless integration into digital SoCs, including Caravel-based platforms.
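To give a feel for what memory-mapped access over a bus like Wishbone could look like, here is a minimal sketch of an address map for the 1,024 cells. The listing does not publish a register map, so everything here is an assumption: the base address `BASE_ADDR`, the one-cell-per-32-bit-word layout, the row-major ordering, and the helper name `cell_address` are all hypothetical.

```python
# Hypothetical address map for a 32x32 crossbar behind a 32-bit bus.
# None of these values come from the provider; they are assumptions for illustration.
ROWS, COLS = 32, 32          # array size from the product description
BASE_ADDR = 0x3000_0000      # assumed base address of the macro
WORD_BYTES = 4               # assumed: one cell per 32-bit word


def cell_address(row, col):
    """Return the assumed byte address of cell (row, col), row-major order."""
    if not (0 <= row < ROWS and 0 <= col < COLS):
        raise IndexError("cell index out of range")
    return BASE_ADDR + WORD_BYTES * (row * COLS + col)


total_cells = ROWS * COLS                      # 1,024 cells, i.e. the 1 kb figure
last_address = cell_address(ROWS - 1, COLS - 1)
```

Under these assumptions the whole array occupies 4 KiB of address space. The actual layout should be taken from the provider's deliverables.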

Applications

- Neuromorphic X1 enables AI processing at the edge with ultra-low power and area, making it ideal for sensor-rich, power-constrained environments.

Files

Note: some files may require an NDA depending on provider policy.

Frequently asked questions about Edge AI Accelerator IP cores

What is Neuromorphic Processor IP?

Neuromorphic Processor IP is an Edge AI Accelerator IP core from BM Labs, listed on Semi IP Hub.

How should engineers evaluate this Edge AI Accelerator?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this Edge AI Accelerator IP.

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.