Embedded AI accelerator IP

Overview

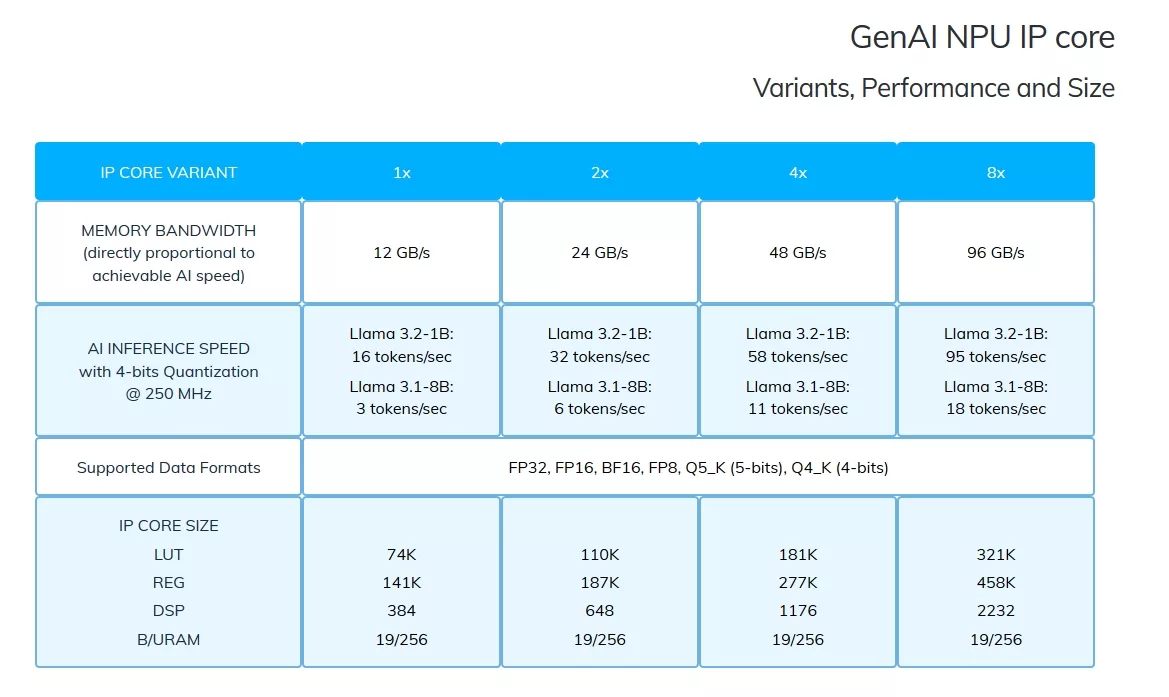

The GenAI IP is the smallest version of our NPU, tailored to small devices such as FPGAs and Adaptive SoCs, where the maximum clock frequency is limited (≤250 MHz) and memory bandwidth is lower (≤100 GB/s).
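
To put the memory bandwidth figure in context, the sketch below applies a common rule of thumb for language model decoding: if every weight must be read from memory once per generated token, throughput cannot exceed bandwidth divided by model size. This is a rough upper bound under stated assumptions, not a figure from the provider. The function name, the 1-billion-parameter model size, and the quantization widths are hypothetical, and the estimate ignores KV-cache traffic, activations, and compute limits.

```python
def max_decode_tokens_per_s(bandwidth_gb_s: float,
                            params_billion: float,
                            bits_per_weight: int) -> float:
    """Bandwidth-bound ceiling on tokens/s.

    Assumes (hypothetically) that every weight is read from external
    memory exactly once per generated token and nothing else uses the bus.
    """
    model_bytes = params_billion * 1e9 * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / model_bytes


if __name__ == "__main__":
    # 100 GB/s is the upper bandwidth limit quoted in the overview;
    # the 1B-parameter model and bit widths are illustrative assumptions.
    for bits in (16, 8, 4):
        ceiling = max_decode_tokens_per_s(100, 1, bits)
        print(f"{bits}-bit weights: at most {ceiling:.0f} tokens/s")
```

Under this simplified model, halving the bits per weight doubles the ceiling, which is one reason quantized models are often used on bandwidth-constrained devices.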

Key features

- Fully reprogrammable solutions: Flexible updates and adjustments for maximum technological adaptability.

- Autonomy in remote environments: Stable operation that does not depend on variable connectivity latency or availability.

- Offline Generative AI: Designed to operate independently, with no security breaches, no subscriptions, and no dependencies.

- Any AI models, plus yours: Run commercially licensed and open-source language models (LMs), or deploy fine-tuned or post-trained versions tailored to your specific needs.

Frequently asked questions about Edge AI Accelerator IP cores

What is Embedded AI accelerator IP?

Embedded AI accelerator IP is an Edge AI Accelerator IP core from RaiderChip listed on Semi IP Hub.

How should engineers evaluate this Edge AI Accelerator?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this Edge AI Accelerator IP.

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.