NPU IP for Data Center and Automotive


Overview

The VIP9400 processing family offers programmable, scalable, and extendable solutions for markets that demand real-time, powerful AI devices. The VIP9400 Series’ patented Neural Network engine and Tensor Processing Fabric deliver superb neural network inference performance with industry-leading power efficiency (TOPS/W) and area efficiency (TOPS/mm²). The VIP9400’s scalable architecture can deliver up to 80 TOPS of compute, enabling AI for data center and automotive applications.
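Peak-throughput and efficiency figures like these are usually derived with the conventions below. This is a sketch under common industry assumptions (one multiply-accumulate counted as two operations; power and area measured for the NPU alone), not VeriSilicon's published methodology. The symbols N_MAC (number of MAC units), f_clk (clock frequency), P (power), and A (silicon area) are introduced here for illustration; the overview does not state their VIP9400 values.

```latex
\text{TOPS}_{\text{peak}} = \frac{2 \, N_{\text{MAC}} \, f_{\text{clk}}}{10^{12}}, \qquad
\eta_{\text{power}} = \frac{\text{TOPS}}{P\,[\mathrm{W}]}, \qquad
\eta_{\text{area}} = \frac{\text{TOPS}}{A\,[\mathrm{mm}^2]}
```

For example, a hypothetical array of 16,384 MAC units clocked at 1 GHz would peak at 2 × 16,384 × 10⁹ / 10¹² ≈ 32.8 TOPS; sustained throughput on real networks is typically lower than the peak.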

In addition to neural network acceleration, VIP9400 Series processors are equipped with Parallel Processing Units (PPUs), which provide full programmability and conform to OpenCL 1.2 and OpenVX 1.2.

Key features

- Programmable Engines (PPU) & NN Engine

  - 128-bit vector processing unit (shader + ext)

  - OpenCL 1.2 shader instruction set

  - Enhanced vision instruction set (EVIS)

  - INT8/16/32 and Float16/32 data types in the PPU

  - Convolution layers in the NN engine: INT8, with an INT16, Float16, or BFloat16 variant

- Tensor Processing Fabric

  - Non-convolution layers

  - Multi-lane processing for data shuffling, normalization, pooling/unpooling, LUT, etc.

  - Network pruning support, zero skipping, and compression

  - On-chip SRAM to save DDR bandwidth

  - Accepts INT8/16, BFloat16, and Float16

- Unified Programming Model

  - OpenCL, OpenVX

  - Parallel processing between the PPU and NN hardware accelerators, with priority setting

  - Supports popular vision and deep learning frameworks: OpenCV, Caffe, Caffe2, TensorFlow, TensorFlow Lite, ONNX, PyTorch, MXNet, Cognitive Toolkit, PaddlePaddle, Keras

- SW & Tools

  - ACUITY: end-to-end neural network development tool

  - Eclipse-based IDE for coding, debugging, and profiling

  - Linux and Android NN API runtime support

  - Task-specific engines that speed up commonly used AI applications

- Scalability

  - Number of PPU and NN cores can be configured independently

  - The same OpenVX/OpenCL code runs on all processor variants, with performance scaling accordingly

- Extensibility

  - FLEXA API: easy integration with other VSI IPs

  - Reconfigurable EVIS that lets users define their own instructions

  - VIP-Connect™: hardware and software interface protocol for plugging in customer hardware accelerators and exposing their functionality through OpenCL/OpenVX custom kernels
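The INT8/BFloat16 number formats and the zero-skipping feature listed above are generic techniques, so they can be illustrated without any VeriSilicon tooling. The sketch below is a minimal plain-Python illustration, assuming symmetric per-tensor INT8 quantization and round-to-nearest-even BFloat16 conversion. It is not how ACUITY or the VIP9400 hardware implements these features, and all function names are hypothetical.

```python
import struct

# Hypothetical helpers illustrating generic NPU number formats and zero skipping.
# They are NOT VeriSilicon or ACUITY APIs.


def choose_scale(values):
    """Symmetric per-tensor scale: map the largest magnitude to 127."""
    max_abs = max(abs(v) for v in values)
    return max_abs / 127 if max_abs > 0 else 1.0


def quantize_int8(values, scale):
    """q = clamp(round(x / scale), -128, 127); Python's round() ties to even."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def dequantize_int8(codes, scale):
    """Approximate reconstruction of real values from INT8 codes."""
    return [q * scale for q in codes]


def float_to_bfloat16_bits(x):
    """Round x to BFloat16 (round-to-nearest-even) and return the 16-bit pattern.

    x is first converted to float32 by struct.pack, which raises OverflowError
    for finite values outside the float32 range.
    """
    if x != x:  # NaN: return a canonical quiet NaN instead of rounding the payload
        return 0x7FC0
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Add 0x7FFF plus the lowest kept bit so exact ties round to an even result.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def bfloat16_bits_to_float(b):
    """Widen a BFloat16 bit pattern back to a Python float (exact)."""
    return struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))[0]


def dot_skip_zeros(weights, activations):
    """Integer dot product that skips pruned (zero) weights.

    Returns (result, macs_issued) so the saving is visible. Python ints stand in
    for the wide accumulator that INT8 datapaths typically use.
    """
    acc = 0
    macs = 0
    for w, a in zip(weights, activations):
        if w == 0:
            continue  # zero weight contributes nothing: skip the multiply-accumulate
        acc += w * a
        macs += 1
    return acc, macs


if __name__ == "__main__":
    q = quantize_int8([0.5, 0.0, -0.25, 0.0, 1.0], scale=0.25)
    print(q)  # [2, 0, -1, 0, 4]
    print(dot_skip_zeros(q, [10, 20, 30, 40, 50]))  # (190, 3)
    print(hex(float_to_bfloat16_bits(1.01171875)))  # 0x3f82
```

Zero skipping only pays off when pruning leaves many exact zeros: in the example, two of the five multiply-accumulates are skipped. BFloat16 keeps float32's exponent range but only 8 bits of significand precision, which is why 1.01171875 (an exact tie) rounds to the even neighbour 1.015625.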

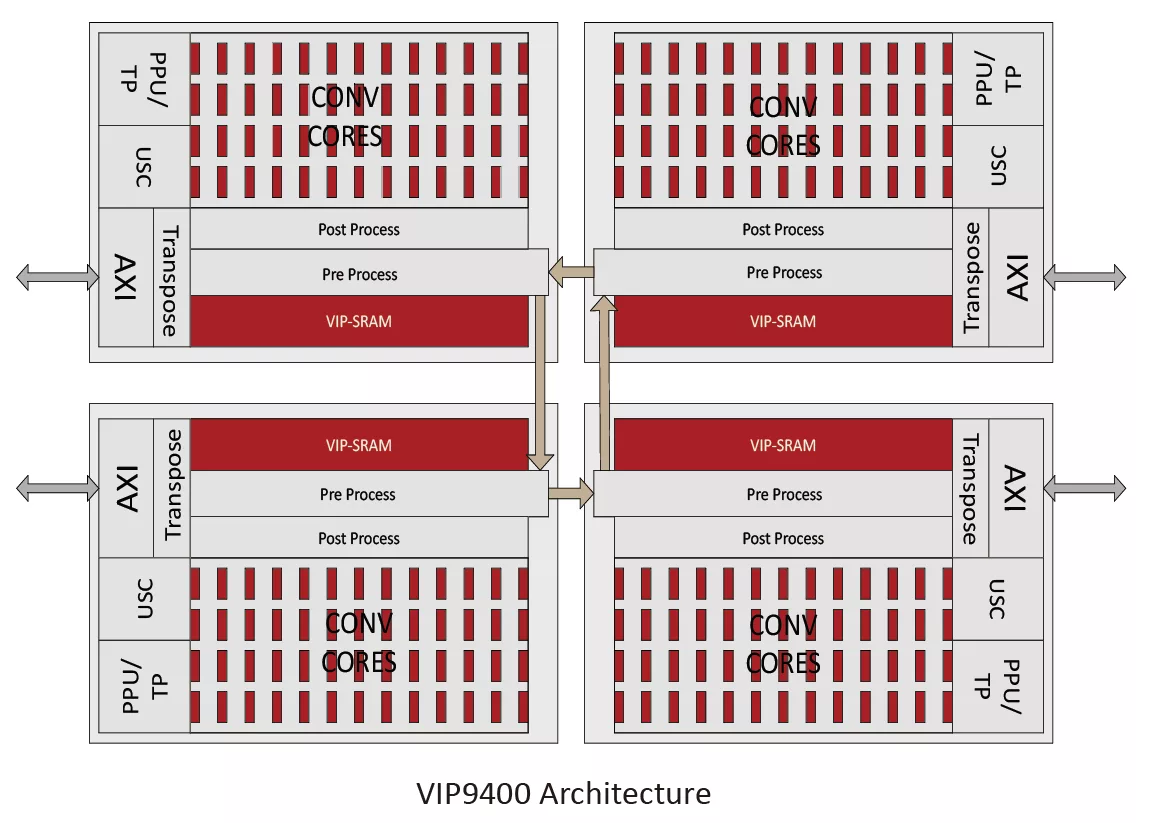

Block Diagram



Frequently asked questions about NPU IP cores

What is NPU IP for Data Center and Automotive?

NPU IP for Data Center and Automotive is an NPU IP core from VeriSilicon Microelectronics (Shanghai) Co., Ltd., listed on Semi IP Hub.

How should engineers evaluate this NPU?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this NPU IP.

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.