4-/8-bit mixed-precision NPU IP


Overview

ENLIGHT features a highly optimized network model compiler that reduces DRAM traffic from intermediate activation data through grouped layer partitioning and scheduling. It is easy to customize to different core sizes and performance levels for customers' target market applications, and it achieves significant efficiencies in size, power, performance, and DRAM bandwidth, based on the industry's first adoption of 4-/8-bit mixed quantization.

The NPU performs deep neural network operations such as convolution, pooling, and non-linear activation functions for edge computing environments. The provider positions this NPU IP as surpassing alternative solutions in compute density and energy efficiency (power, performance, and area).
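
To illustrate why grouped layer partitioning can cut DRAM traffic, the following is a minimal sketch of a traffic model, not the ENLIGHT compiler's actual algorithm. It assumes that layer-by-layer execution reads each layer's input from DRAM and writes its output back, while a group of consecutive layers keeps its intermediate activations on-chip. The function names and activation sizes are hypothetical, and the model ignores weight traffic and any tiling overlap.

```python
# Hypothetical traffic model; all sizes are illustrative and in bytes.

def layerwise_traffic(input_bytes, layer_output_bytes):
    """DRAM bytes moved when every layer reads its input from DRAM
    and writes its output back to DRAM."""
    traffic = 0
    current_in = input_bytes
    for out_bytes in layer_output_bytes:
        traffic += current_in + out_bytes
        current_in = out_bytes
    return traffic


def grouped_traffic(input_bytes, layer_output_bytes, groups):
    """DRAM bytes moved when each group of consecutive layers keeps its
    intermediate activations on-chip.

    `groups` lists the number of layers in each group. Only the input of
    the first layer and the output of the last layer of a group touch DRAM.
    """
    if sum(groups) != len(layer_output_bytes):
        raise ValueError("groups must cover every layer exactly once")
    traffic = 0
    current_in = input_bytes
    end = 0
    for size in groups:
        end += size
        group_out = layer_output_bytes[end - 1]
        traffic += current_in + group_out
        current_in = group_out
    return traffic


outputs = [400_000, 400_000, 200_000, 100_000]  # assumed per-layer output sizes
baseline = layerwise_traffic(150_000, outputs)
grouped = grouped_traffic(150_000, outputs, groups=[2, 2])
print(f"layer-by-layer: {baseline} bytes, grouped: {grouped} bytes")
```

In this toy model, the saving comes entirely from the intermediate activations that never leave the chip. A real compiler also has to choose groups whose working set fits in on-chip memory.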

Key features

- Mixed-Precision (4/8-bit) Computation: Higher efficiency in PPA (power, performance, and area) and DRAM bandwidth

- Deep Neural Network (DNN)-optimized Vector Engine: Better adaptation to future DNN changes

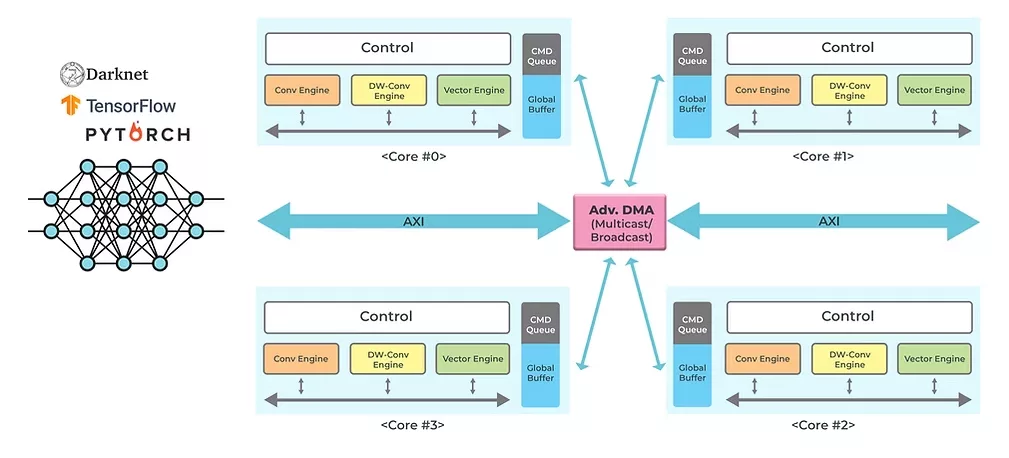

- Scale-out with Multi-core: Even higher performance by parallel processing of DNN layers

- Modern DNN Algorithm Support: Depth-wise convolution, feature pyramid network (FPN), swish/mish activation, etc.

- High-level Inter-layer Optimization: Grouped layer partitioning and scheduling for reducing DRAM traffic from intermediate data

- DNN-layer Parallelization: Efficient use of multi-core resources for higher performance and optimized data movement among cores

- Aggressive Quantization: Maximize use of 4-bit computation capability
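
The bandwidth benefit of 4-bit computation listed above can be illustrated with a small sketch: two signed 4-bit values fit in one byte, so a tensor stored at 4 bits needs roughly half the bytes of the same tensor at 8 bits. The packing layout (low nibble first) and the function names are assumptions made for illustration; they do not describe ENLIGHT's actual memory format.

```python
def pack_int4(values):
    """Pack signed 4-bit integers (-8..7) two per byte, low nibble first."""
    if any(v < -8 or v > 7 for v in values):
        raise ValueError("value out of signed 4-bit range")
    # Pad with a zero so the values pair up evenly.
    padded = list(values) + [0] * (len(values) % 2)
    out = bytearray()
    for lo, hi in zip(padded[0::2], padded[1::2]):
        out.append((lo & 0xF) | ((hi & 0xF) << 4))
    return bytes(out)


def unpack_int4(data, count):
    """Inverse of pack_int4; `count` drops the padding nibble, if any."""
    values = []
    for byte in data:
        for nibble in (byte & 0xF, byte >> 4):
            # Nibbles 8..15 encode the negative values -8..-1.
            values.append(nibble - 16 if nibble >= 8 else nibble)
    return values[:count]


weights = [-8, 7, 3, -1, 0]  # illustrative 4-bit weights
packed = pack_int4(weights)
restored = unpack_int4(packed, len(weights))
print(f"{len(weights)} bytes as int8 vs {len(packed)} bytes packed as int4")
```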

Block Diagram

Benefits

- NN Converter

  - Converts a network file into internal network format (.enlight)

  - Supports ONNX (PyTorch), TF-Lite, and CFG (Darknet)

- NN Quantizer

  - Generates quantized network: float to 4-/8-bit integer

  - Supports per-layer quantization of activation and per-channel quantization of weight

- NN Simulator

  - Evaluates full precision network and quantized network

  - Estimates accuracy loss due to quantization

- NN Compiler

  - Generates NPU handling code for target architecture and network
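
The quantizer and simulator steps above can be sketched in a few lines. This is a minimal illustration, not the toolkit's API: it assumes symmetric quantization with one scale per output channel for weights and one scale per layer for activations, and it uses mean squared reconstruction error as a rough stand-in for accuracy loss. All function names and tensor values are hypothetical.

```python
def quantize(values, bits):
    """Symmetric quantization of a list of floats with one shared scale."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Clamp to the signed integer range for the chosen bit width.
    q = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Map integers back to approximate float values."""
    return [v * scale for v in q]


def quantize_per_channel(weights, bits):
    """Quantize each output channel with its own scale."""
    return [quantize(channel, bits) for channel in weights]


def mse(a, b):
    """Mean squared error between two equal-length lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


# Illustrative tensors: two weight channels with very different ranges.
weights = [[0.50, -0.25, 0.10], [0.02, -0.01, 0.03]]
activations = [0.0, 1.5, 3.0, 0.75]

# Per-layer activation quantization: a single scale for the whole tensor.
act_q, act_scale = quantize(activations, bits=8)

# Compare weight reconstruction error at 8 and 4 bits.
errors = {}
for bits in (8, 4):
    per_channel = quantize_per_channel(weights, bits)
    errors[bits] = sum(
        mse(channel, dequantize(q, scale))
        for channel, (q, scale) in zip(weights, per_channel)
    ) / len(weights)
    print(f"{bits}-bit weight MSE: {errors[bits]:.3e}")
```

Per-channel scales matter here because the second channel's values are more than ten times smaller than the first's. With a single shared scale at 4 bits, most of them would round to zero.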

Applications

- Person, vehicle, bike, traffic sign detection

- Parking lot vehicle location detection & recognition

- License plate detection & recognition

- Detection, tracking, and action recognition for surveillance

What’s Included?

- RTL design for synthesis

- SW toolkits and device driver

- User guide

- Integration guide

Files

Note: some files may require an NDA depending on provider policy.

Frequently asked questions about NPU IP cores

What is 4-/8-bit mixed-precision NPU IP?

4-/8-bit mixed-precision NPU IP is an NPU IP core from OPENEDGES Technology, Inc. listed on Semi IP Hub.

How should engineers evaluate this NPU?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this NPU IP.

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.