LLM AI IP Core

For sophisticated workloads, DeepTransformCore is optimized for language and vision applications.

Overview

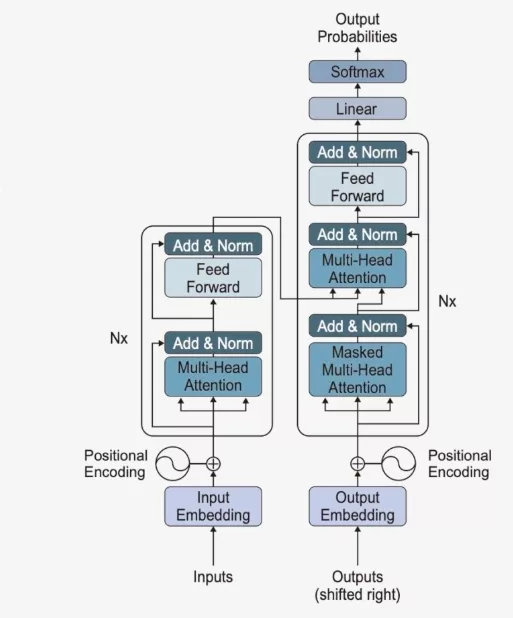

DeepTransformCore supports both encoder and decoder transformer architectures, with flexible DRAM configurations and FPGA compatibility. It eliminates the burden of complex software integration, so customers can rapidly develop custom AI SoC (system-on-chip) designs.

Block Diagram

Benefits

- Speech-to-speech multimodal support

- Response latency within 2 seconds

- Flexible DRAM configurations and FPGA compatibility

- Supports DeepMentor's exclusive low-distortion training models

Specifications

Identity

Files

Note: some files may require an NDA depending on provider policy.

Provider

Frequently asked questions about NPU IP cores

What is LLM AI IP Core?

LLM AI IP Core is an NPU IP core from DeepMentor listed on Semi IP Hub.

How should engineers evaluate this NPU?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this NPU IP.
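The review items in the answer above can be tracked as a simple checklist. The following is a minimal Python sketch, not a tool provided by DeepMentor or Semi IP Hub: the `IpReview` class and the `REVIEW_ITEMS` tuple are hypothetical names introduced for illustration, and the items are taken directly from the answer above.

```python
from dataclasses import dataclass, field

# Review items copied from the FAQ answer; the tuple name is illustrative only.
REVIEW_ITEMS = (
    "overview",
    "key features",
    "supported foundries and nodes",
    "maturity",
    "deliverables",
    "provider information",
)


@dataclass
class IpReview:
    """Hypothetical helper that tracks which listing items have been reviewed."""

    name: str
    reviewed: set = field(default_factory=set)

    def mark(self, item: str) -> None:
        # Reject anything that is not on the checklist to catch typos early.
        if item not in REVIEW_ITEMS:
            raise ValueError(f"unknown review item: {item}")
        self.reviewed.add(item)

    def missing(self) -> list:
        # Return unreviewed items in checklist order.
        return [item for item in REVIEW_ITEMS if item not in self.reviewed]

    def ready_to_shortlist(self) -> bool:
        # Shortlist only once every item has been reviewed.
        return not self.missing()


review = IpReview("LLM AI IP Core")
review.mark("overview")
review.mark("key features")
print(review.missing())
print(review.ready_to_shortlist())
```

In this sketch an IP core becomes eligible for a shortlist only after every item has been marked, which mirrors the "review before shortlisting" order in the answer.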

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.