NPU IP Core for Data Center


Overview

High Performance Scalability across Complex Models

Cloud-based AI inference is the backbone of retail, e-commerce, healthcare, Industry 4.0, gaming, and many other applications. The highly varied needs of these applications mandate that AI inference solutions support a large number of diverse neural networks.

High Performance, High Scalability for Today's and Tomorrow's Needs

Origin Evolution™ for Data Center offers out-of-the-box compatibility with popular LLM and CNN networks. Attention-based processing optimization and advanced memory management ensure optimal AI performance across a variety of today’s standard and emerging neural networks. Built on a hardware and software co-designed architecture, Origin Evolution for Data Center scales to 128 TFLOPS in a single core, and multi-core configurations reach PetaFLOPS performance.
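The jump from a 128 TFLOPS core to PetaFLOPS is plain arithmetic on core count. The sketch below is illustrative only: `cores_for_target` and its `scaling_efficiency` parameter are hypothetical, and the default assumes perfect linear scaling, which real multi-core systems rarely achieve.

```python
import math

# Per-core peak quoted above, in TFLOPS.
PER_CORE_TFLOPS = 128.0


def cores_for_target(target_pflops: float,
                     per_core_tflops: float = PER_CORE_TFLOPS,
                     scaling_efficiency: float = 1.0) -> int:
    """Smallest core count whose combined peak reaches target_pflops.

    scaling_efficiency is a hypothetical knob: 1.0 means perfect linear
    scaling; lower values model multi-core overheads.
    """
    if target_pflops <= 0:
        raise ValueError("target_pflops must be positive")
    if not 0.0 < scaling_efficiency <= 1.0:
        raise ValueError("scaling_efficiency must be in (0, 1]")
    effective_tflops = per_core_tflops * scaling_efficiency
    # 1 PFLOPS = 1000 TFLOPS; round up to whole cores.
    return math.ceil(target_pflops * 1000.0 / effective_tflops)


if __name__ == "__main__":
    print(cores_for_target(1.0))                          # → 8
    print(cores_for_target(1.0, scaling_efficiency=0.8))  # → 10
```

Under ideal scaling, eight cores exceed 1 PFLOPS of peak compute; the 0.8 case shows how quickly overheads add cores.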

Application and Model Flexibility

Data centers are the backbone of many popular AI deployments, including chatbots, coding assistants, predictive maintenance, intelligent analysis tools, and content moderation. This diverse usage mandates that data center AI inference solutions support the widest possible variety of networks as power- and performance-efficiently as possible. AI inference solutions must support today's popular networks while retaining the flexibility to run newer, larger networks.

Innovative Architecture

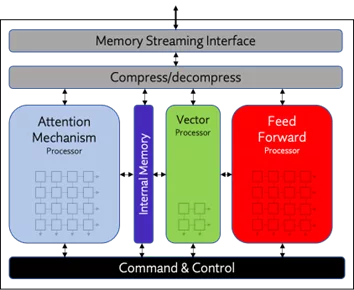

Origin Evolution uses Expedera’s unique packet-based architecture to achieve unprecedented NPU efficiency. Packets, which are contiguous fragments of neural networks, are an ideal way to overcome large memory movements and widely differing layer sizes, both of which LLMs exacerbate. Packets are routed through discrete processing blocks, including Feed Forward, Attention, and Vector, which accommodate the varying operations, data types, and precisions required when running different LLM and CNN networks. Origin Evolution includes a high-speed external memory streaming interface that is compatible with the latest DRAM and HBM standards.
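To make "packets routed through discrete processing blocks" concrete, here is a minimal sketch that dispatches packets to per-block queues. It is not Expedera's scheduler: the `Packet` fields, the `BLOCK_FOR_OP` table, and the assumption that each packet carries a single dominant operator are all hypothetical; only the three block names come from the text.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List

# Hypothetical op-to-block table. The block names come from the text;
# which operators each block actually handles is an assumption.
BLOCK_FOR_OP: Dict[str, str] = {
    "matmul": "feed_forward",
    "fc": "feed_forward",
    "conv": "feed_forward",
    "attention": "attention",
    "softmax": "vector",
    "activation": "vector",
    "elementwise": "vector",
}


@dataclass(frozen=True)
class Packet:
    """Illustrative packet: one network fragment tagged with its dominant op."""
    packet_id: int
    op: str
    dtype: str  # e.g. "int8" or "fp16"; carried along, not used for routing


def route(packets: Iterable[Packet]) -> Dict[str, List[int]]:
    """Assign each packet to a processing block, keeping arrival order per block."""
    queues: Dict[str, List[int]] = defaultdict(list)
    for pkt in packets:
        block = BLOCK_FOR_OP.get(pkt.op)
        if block is None:
            raise KeyError(f"no processing block handles op {pkt.op!r}")
        queues[block].append(pkt.packet_id)
    return dict(queues)


if __name__ == "__main__":
    stream = [
        Packet(0, "matmul", "int8"),
        Packet(1, "attention", "fp16"),
        Packet(2, "softmax", "fp16"),
        Packet(3, "fc", "int8"),
    ]
    print(route(stream))
    # → {'feed_forward': [0, 3], 'attention': [1], 'vector': [2]}
```

Keeping arrival order within each queue matters because packets are contiguous fragments of one network, so their relative order encodes data dependencies.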

Ultra-Efficient Neural Network Processing

Accepting standard, custom, and black box networks in a variety of AI representations, Origin Evolution offers a wealth of user features such as mixed precision quantization. Expedera’s unique packet-based processing breaks much larger networks into smaller, contiguous fragments, overcoming the hurdle of large memory movements and delivering much higher processor utilization. Packets are routed through discrete processing blocks, including Feed Forward, Attention, and Vector, which accommodate the varying operations, data types, and precisions required when running different types of networks. Internal memory holds intermediate data, while the memory streaming interface connects to off-chip storage.
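One simple way to picture breaking a network into contiguous fragments that fit internal memory is a greedy partition over the layer order. This is a generic sketch, not Expedera's packet compiler: it assumes each layer has a known working-set size, that a fragment's needs are simply the sum of its layers' working sets (real compilers reuse buffers), and it uses an 8 MB budget only because that figure appears in the example specifications.

```python
from typing import List, Sequence


def partition_layers(working_set_bytes: Sequence[int],
                     budget_bytes: int) -> List[List[int]]:
    """Greedily split an ordered layer list into contiguous fragments.

    A fragment grows until adding the next layer would push its summed
    working set past budget_bytes. Layer order is never changed, so each
    fragment is a contiguous slice of the network.
    """
    fragments: List[List[int]] = []
    current: List[int] = []
    used = 0
    for idx, size in enumerate(working_set_bytes):
        if size > budget_bytes:
            # A real compiler would tile such a layer; this sketch rejects it.
            raise ValueError(f"layer {idx} needs {size} bytes, over the budget")
        if used + size > budget_bytes:
            # Close the current fragment and start a new one.
            fragments.append(current)
            current, used = [], 0
        current.append(idx)
        used += size
    if current:
        fragments.append(current)
    return fragments


if __name__ == "__main__":
    MB = 1 << 20
    layers = [3 * MB, 4 * MB, 2 * MB, 6 * MB, 1 * MB, 1 * MB]
    print(partition_layers(layers, budget_bytes=8 * MB))
    # → [[0, 1], [2, 3], [4, 5]]
```

Because each fragment's intermediates stay on chip, only fragment boundaries require traffic to external memory in this simplified model.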

Specifications

| Specification | Details |
|---|---|
| Compute Capacity | Up to 64K FP16 MACs |
| Multi-tasking | Runs simultaneous jobs |
| Example Networks Supported | Llama2, Llama3, ChatGLM, DeepSeek, Mistral, Qwen, MiniCPM, Yolo, MobileNet, and many others, including proprietary/black box networks |
| Example Performance | 575 tokens per second, DeepSeek v3 prompt processing, 64 TFLOPS engine, 8 MB internal memory, 256 GB/s peak external bandwidth. Specified in TSMC 7nm at a 1 GHz system clock, with no sparsity, compression, or pruning applied (though all are supported) |
| Layer Support | Standard NN functions, including Transformers, Conv, Deconv, FC, Activations, Reshape, Concat, Elementwise, Pooling, Softmax, and others. Support for custom operators. |
| Data Types | FP16/FP32/INT4/INT8/INT10/INT12/INT16 activations/weights |
| Quantization | Software toolchain supports Expedera, customer-supplied, or third-party quantization. Mixed precision supported. |
| Latency | Deterministic performance guarantees, no back pressure |
| Frameworks | Hugging Face, Llama.cpp, PyTorch, TVM, ONNX, TensorFlow, and others |
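The Compute Capacity and Example Performance rows can be related with the usual convention that one multiply-accumulate counts as two FLOPs per clock. The sketch assumes the 1 GHz clock from the Example Performance row also applies to the 128 TFLOPS headline figure, which the text does not state explicitly; the function names are illustrative.

```python
def peak_tflops(num_macs: int, clock_hz: float) -> float:
    """Peak dense throughput, counting each MAC as 2 FLOPs per cycle."""
    return num_macs * 2 * clock_hz / 1e12


def macs_for_tflops(target_tflops: float, clock_hz: float) -> float:
    """Inverse of peak_tflops: MAC units needed for a target peak."""
    return target_tflops * 1e12 / (2 * clock_hz)


if __name__ == "__main__":
    clock = 1e9  # 1 GHz, the clock stated in the Example Performance row
    print(peak_tflops(64_000, clock))   # "64K" read as 64,000 → 128.0
    print(peak_tflops(65_536, clock))   # "64K" read as 65,536 → 131.072
    print(macs_for_tflops(64, clock))   # 64 TFLOPS engine → 32000.0
```

Read as 64,000 MACs, the table's compute capacity matches the 128 TFLOPS single-core figure exactly; under the same assumption, the 64 TFLOPS example engine corresponds to roughly half that MAC count.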

Key features

- Up to 128 TFLOPS per core

- Support for standard, custom, and proprietary neural networks

- Readily customized for specific use cases and deployment needs

- Full software stack provided, including compiler, estimator, scheduler, and quantizer

- Runs LLM, CNN and other network types

- Delivered as Soft IP (RTL) or GDS
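The toolchain's estimator is not documented here, but a common first-order way to estimate per-layer performance is a roofline model: a layer takes at least as long as its compute at peak FLOPS or its data transfer at peak bandwidth, whichever is larger. The sketch below is that generic model, not Expedera's estimator. It plugs in the Example Performance figures (64 TFLOPS, and the "256GB" peak external bandwidth read as 256 GB/s), and it counts only weight traffic for a single INT8 matrix, ignoring activations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Engine:
    peak_flops: float   # FLOP/s
    mem_bw: float       # external memory bandwidth, bytes/s


def layer_time_s(eng: Engine, flops: float, bytes_moved: float) -> float:
    """Roofline lower bound on layer time: limited by compute or by memory."""
    return max(flops / eng.peak_flops, bytes_moved / eng.mem_bw)


def bound_by(eng: Engine, flops: float, bytes_moved: float) -> str:
    """Report which roof limits the layer under this simple model."""
    compute_s = flops / eng.peak_flops
    memory_s = bytes_moved / eng.mem_bw
    return "compute" if compute_s >= memory_s else "memory"


if __name__ == "__main__":
    eng = Engine(peak_flops=64e12, mem_bw=256e9)
    d = 4096
    weight_bytes = d * d          # one INT8 d x d weight matrix
    for tokens in (1, 512):       # single-token step vs. a 512-token prompt
        flops = 2 * tokens * d * d
        print(tokens, bound_by(eng, flops, weight_bytes),
              layer_time_s(eng, flops, weight_bytes))
```

With these numbers, a layer needs about 250 FLOPs per byte moved (64e12 / 256e9) to be compute-bound, so one weight load must serve at least 125 tokens. A single-token pass is heavily memory-bound, which is why reducing memory movement matters so much for LLMs.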


Benefits

- Choose the Features You Need: Customization brings many advantages, including increased performance, lower latency, reduced power consumption, and the elimination of dark silicon waste. Expedera works with data center chip designers during their design stage to understand and optimize for their use cases, PPA goals, and deployment needs. Using this information, we configure Origin Evolution to create a customized solution that fits the application.

- Reducing Memory Bandwidth: Origin Evolution's packet-based architecture reduces the memory requirements of popular LLMs such as Llama 3.2 and Qwen1 by as much as 79%, saving system power and improving processor utilization.

- Efficient Resource Utilization: Origin Evolution for Data Center scales to 128 TFLOPS in a single core, eliminating the memory sharing, security, and area penalty issues faced by lower-performing, tiled AI accelerator engines.

- Full Software Stack: Origin Evolution employs an easy-to-use software stack that imports trained networks from popular representations such as Hugging Face, Llama.cpp, PyTorch, TVM, ONNX, TensorFlow, and others. It provides various quantization options, automatic completion, compilation, and estimator and profiling tools, and it also supports multi-job APIs.

- LLM, CNN, and other Network Support: Origin Evolution offers out-of-the-box support for 100+ popular neural networks, including Llama2, Llama3, ChatGLM, DeepSeek, Mistral, Qwen, MiniCPM, Yolo, MobileNet, and many others.
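The software stack's quantization options and the mixed-precision support listed in the specifications can be illustrated with textbook symmetric per-tensor quantization. This is a generic sketch, not Expedera's quantizer: `pick_bits` is a hypothetical per-layer rule that chooses the narrowest width meeting an error tolerance, and the bit-width range covers the INT4 through INT16 types in the specifications.

```python
from typing import List, Sequence, Tuple


def quantize_symmetric(values: Sequence[float], bits: int) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization to signed integers of width `bits`."""
    if not 2 <= bits <= 16:
        raise ValueError("bits must be between 2 and 16")
    qmax = (1 << (bits - 1)) - 1                  # 7 for INT4, 127 for INT8
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q: Sequence[int], scale: float) -> List[float]:
    return [x * scale for x in q]


def max_error(values: Sequence[float], bits: int) -> float:
    """Worst-case absolute error after a quantize/dequantize round trip."""
    q, scale = quantize_symmetric(values, bits)
    return max((abs(a - b) for a, b in zip(values, dequantize(q, scale))),
               default=0.0)


def pick_bits(values: Sequence[float], tolerance: float,
              candidates: Sequence[int] = (4, 8, 16)) -> int:
    """Hypothetical mixed-precision rule: narrowest width meeting the tolerance."""
    for bits in sorted(candidates):
        if max_error(values, bits) <= tolerance:
            return bits
    return max(candidates)  # fall back to the widest candidate


if __name__ == "__main__":
    weights = [63.5, -10.0, 1.2]
    print(quantize_symmetric(weights, 8))      # → ([127, -20, 2], 0.5)
    print(pick_bits(weights, tolerance=0.25))  # → 8
```

Applying a rule like `pick_bits` layer by layer yields a mixed-precision network: tolerant layers drop to INT4 while sensitive ones keep wider types. Production quantizers typically judge sensitivity by end-to-end accuracy rather than raw per-tensor error.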


Learn more about NPU IP core

Heterogeneous NPU Data Movement Tax: Intel's Own Slides Tell the Story

The Upcoming NPU Shakeout

One Instruction Stream, Infinite Possibilities: The Cervell™ Approach to Reinventing the NPU

Legacy IP Providers Struggle to Solve the NPU Dilemma

Can You Rely Upon your NPU Vendor to be Your Customers' Data Science Team?

Frequently asked questions about NPU IP cores

What is NPU IP Core for Data Center?

NPU IP Core for Data Center is an NPU IP core from Expedera listed on Semi IP Hub.

How should engineers evaluate this NPU?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this NPU IP.

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.