AI inference processor IP


Overview

A configurable AI inference processor IP whose performance and size can be optimized for the target design. It processes data of all kinds, such as images, video, and sound, on the edge side, where real-time response, safety, and privacy protection are required.

Key features

- High precision

  - The computing unit of the DV700 series supports FP16 floating-point arithmetic as standard, so AI models trained on PCs and cloud servers can be used without re-training. Because it maintains high inference precision, it is well suited to AI systems that require high reliability, such as autonomous driving and robotics.

- Compatible with various DNN models

  - The DV700 series has a hardware configuration optimized for deep learning inference and can run various DNN models for tasks such as object detection, semantic segmentation, skeleton estimation, and distance estimation.

  - Examples of compatible models: MobileNet, YOLOv3, SegNet, PoseNet

- Development environment (SDK/tools) that facilitates AI application development

  - The DV700 series comes with a development environment (SDK/tools) alongside the IP core. The development environment supports standard AI development frameworks (Caffe, Keras, TensorFlow), so customers can easily run AI inference on the DV700 series by preparing a model built with one of these frameworks.

  - Please refer to GitHub for details of the development environment (SDK/tools).
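The FP16 point in the feature list can be illustrated with a small numeric check. The following is a minimal sketch, not DMP's tooling: it uses only Python's standard `struct` module to round values to IEEE 754 half precision and measures the rounding error. The weight values are hypothetical, and Python floats are 64-bit, so this only approximates what happens when trained FP32 weights are stored as FP16.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    # The "e" format code packs a 16-bit half-precision float.
    return struct.unpack("<e", struct.pack("<e", x))[0]


def max_relative_error(values):
    """Largest relative rounding error over the non-zero values."""
    return max(abs(to_fp16(v) - v) / abs(v) for v in values if v != 0.0)


# Hypothetical weights, all inside the normal FP16 range.
weights = [0.7312, -0.0421, 0.0038, 1.25, -2.6667, 0.1]

# FP16 has a 10-bit stored mantissa, so round-to-nearest keeps the
# relative error of normal-range values at or below 2**-11.
FP16_MAX_REL_ERROR = 2.0 ** -11

err = max_relative_error(weights)
print(f"max relative error: {err:.2e} (bound {FP16_MAX_REL_ERROR:.2e})")
```

A bounded per-weight error of this size is one reason FP16 inference can often reuse trained weights directly, whereas lower-precision integer formats typically need calibration or re-training. Whether a given model keeps its accuracy still has to be measured on the target hardware.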

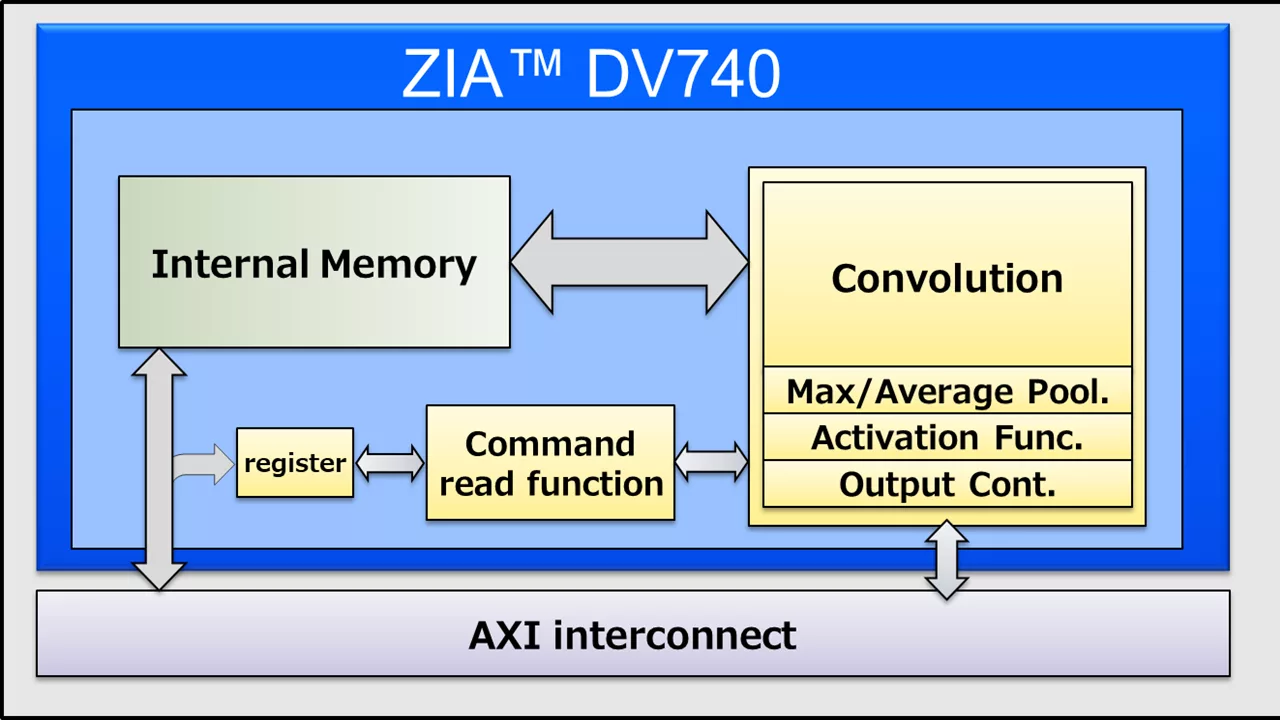

Block Diagram

Benefits

- Up to 1 kMAC (2 TOPS @ 1 GHz)

- Processor replaced with an optimized controller

- High-bandwidth on-chip RAM (512 KB to 4 MB)

- 8-bit weight compression

- Framework

- Caffe 1.x, Keras 2.x

- TensorFlow 1.15

- ONNX format support
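The "1 kMAC (2 TOPS @ 1 GHz)" figure in the list above follows from the usual convention that one multiply-accumulate counts as two operations (one multiply, one add). The sketch below works through that arithmetic. The function name and the choice between 1000 and 1024 MAC units are assumptions for illustration; the source does not say which value "1 kMAC" means, and the result is a theoretical peak, not a measured throughput.

```python
def peak_tops(num_macs: int, clock_hz: float, ops_per_mac: int = 2) -> float:
    """Theoretical peak throughput in tera-operations per second.

    Assumes every MAC unit completes one multiply-accumulate per cycle,
    counted as `ops_per_mac` operations.
    """
    return num_macs * ops_per_mac * clock_hz / 1e12


CLOCK_HZ = 1e9  # 1 GHz, as quoted in the list above

# "1 kMAC" read as 1000 units (assumption): matches the quoted 2 TOPS.
tops_decimal = peak_tops(1000, CLOCK_HZ)

# "1 kMAC" read as 1024 units (assumption): slightly above 2 TOPS.
tops_binary = peak_tops(1024, CLOCK_HZ)

print(f"1000 MACs @ 1 GHz: {tops_decimal:.3f} TOPS")
print(f"1024 MACs @ 1 GHz: {tops_binary:.3f} TOPS")
```

Because the IP is configurable, the same formula gives the peak for smaller MAC counts or lower clock frequencies. Sustained throughput depends on how well a model keeps the MAC array fed from on-chip RAM.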

Applications

- Automotive, surveillance cameras, drones, wearables, medical, robots, smartphones, tablets, TVs, gaming, multifunction printers, digital cameras, and more

What’s Included?

- Synthesisable RTL

- SDK/Tools

- OS Support: Linux, RTOS



Frequently asked questions about Edge AI Accelerator IP cores

What is AI inference processor IP?

AI inference processor IP is an Edge AI Accelerator IP core from Digital Media Professionals Inc. (DMP Inc.) listed on Semi IP Hub.

How should engineers evaluate this Edge AI Accelerator?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this Edge AI Accelerator IP.

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.