Parallel Processing Unit

Flow's Parallel Processing Unit solves one of computing's most fundamental dilemmas - parallel processing.

Overview

The Parallel Processing Unit (PPU) is an IP block that integrates tightly with the CPU on the same silicon. It is designed to be highly configurable to meet the specific requirements of numerous use cases.

Customization options include:

- Number of cores in PPU (4, 16, 64, 256, etc.)

- Type and number of functional units (such as ALUs, FPUs, MUs, GUs, NUs)

- Size of on-chip memory resources (caches, buffers, scratchpads)

- Instruction set modifications tailored to complement the CPU’s instruction set extensions
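As a rough illustration of what such a parameter set might look like, the sketch below models the options above as a small Python data structure. The class name `PPUConfig`, its field names, the per-core interpretation of functional unit counts, and all example values are assumptions made for illustration; they are not taken from Flow's deliverables or tooling.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PPUConfig:
    """Hypothetical parameter set for one PPU instance (illustrative only)."""

    cores: int  # number of PPU cores, e.g. 4, 16, 64, 256
    # Assumed to be counts per core, e.g. {"ALU": 2, "FPU": 1}
    functional_units: dict = field(default_factory=dict)
    cache_kib: int = 0       # on-chip cache size (placeholder unit and value)
    scratchpad_kib: int = 0  # on-chip scratchpad size (placeholder unit and value)

    def __post_init__(self):
        # Reject obviously invalid parameter combinations early.
        if self.cores <= 0:
            raise ValueError("cores must be positive")
        for unit, count in self.functional_units.items():
            if count < 0:
                raise ValueError(f"negative count for {unit}")

    def total_units(self, unit: str) -> int:
        """Total number of one functional unit type across all cores."""
        return self.cores * self.functional_units.get(unit, 0)


# Example values are made up for the sketch.
example = PPUConfig(cores=16, functional_units={"ALU": 2, "FPU": 1}, cache_kib=256)
print(example.total_units("ALU"))
```

Keeping the parameters in one immutable record makes it easy to compare candidate configurations side by side before committing to one.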

CPU modifications are minimal: they involve integrating the PPU interface into the instruction set and updating the number of CPU cores in order to unlock new levels of performance.

Our parametric design enables extensive customization, including the number of PPU cores, the variety and number of functional units, and the size of on-chip memory resources. Your performance enhancement scales with the number of PPU cores. A 16-core PPU is ideal for small devices like smart watches; a 64-core PPU is well-suited for smartphones and PCs; and a 256-core PPU excels in high-demand environments such as AI, cloud, and edge computing servers.

Core benefits of Flow's technology

100x performance boost

- Flow's innovative Parallel Processing Unit (PPU) enhances CPU performance by up to 100 times, ushering in the era of SuperCPUs.

- Designed for full backward compatibility, the PPU boosts the performance of existing software and applications after recompilation. The more parallel the functionality, the greater the boost in performance.

- Flow's technology even enhances the entire computing ecosystem. While the CPU gains direct benefits, ancillary components—matrix units, vector units, NPUs, and GPUs—also see improved performance through the boosted CPU capabilities, all thanks to the PPU.
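The relationship between parallelism and speedup can be pictured with Amdahl's law, a standard textbook model. The sketch below is generic: it is not Flow's performance model, it does not reproduce the 100x figure, and the function name `amdahl_speedup` and the 95% parallel fraction are assumptions chosen only for illustration.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Idealized speedup from Amdahl's law: 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Hypothetical workload in which 95% of the run time is parallelizable.
for cores in (4, 16, 64, 256):
    print(cores, round(amdahl_speedup(0.95, cores), 2))
```

Under this model the serial portion of a program caps the achievable gain, which is why workloads with a larger parallel share benefit more from additional cores.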

2x faster legacy software and applications

- Flow’s PPU enhances legacy code without altering the original application, and delivers greater performance gains when paired with recompiled operating system or programming system libraries.

- The result? Significant speed improvements across a wide range of applications, especially those that exhibit parallelism but are constrained by traditional thread-based processing. Our PPU unlocks the full potential of these applications, delivering gains where previous architectures fell short.

Parametric design

- The configurable, parametric design of the PPU allows it to adapt to diverse uses. Everything can be tailored to meet the specific requirements of a given use case, and we do mean everything: the number of PPU cores (4, 16, 64, 256, or more); the type and number of functional units, such as ALUs, FPUs, MUs, GUs, and NUs; and even the size of on-chip memory resources (caches, buffers, and scratchpads).

- The performance scalability is directly linked to the number of PPU cores. A PPU with 4 cores is ideal for small devices like smart watches, a 16-core PPU is perfect for smartphones, and a 64-core PPU delivers excellent performance for PCs. For servers, a PPU with 256 cores is recommended, enabling them to handle the most demanding computing tasks with ease.

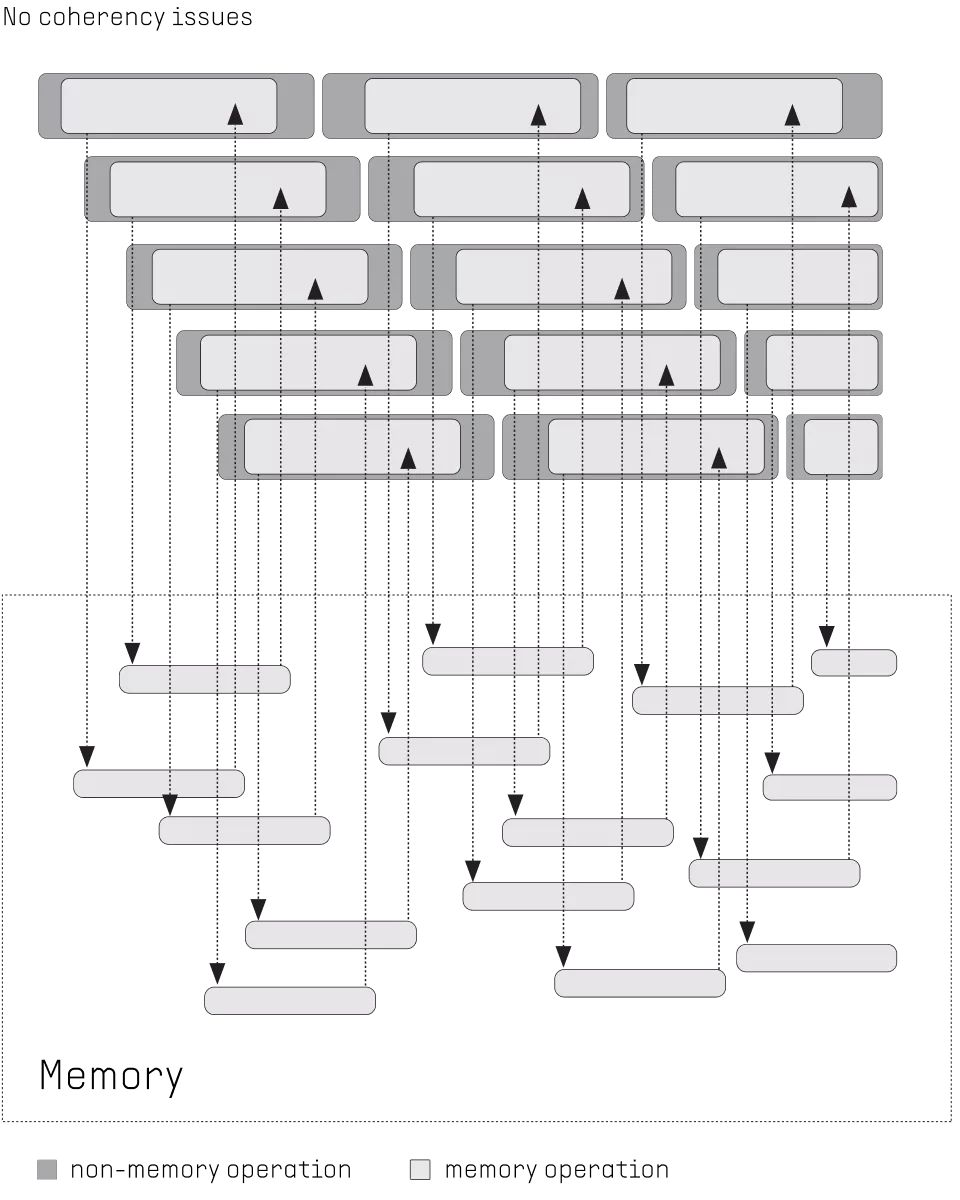

Block Diagram

Benefits

- Flow technology is fully backward compatible with existing legacy software and applications. The PPU's compiler automatically recognizes parallel parts of the code and executes them on the PPU cores.

- What’s more, Flow is developing an AI tool to help application and software developers identify parallel parts of the code and to propose methods of streamlining those for maximum performance.
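As a generic illustration of what "parallel parts of the code" can mean, the sketch below contrasts a loop whose iterations are independent with one that carries a dependency from one iteration to the next. It says nothing about how Flow's compiler or AI tool actually analyzes code; the function names are hypothetical.

```python
def scale(values, factor):
    # Each output element depends only on its own input element,
    # so the iterations could in principle run at the same time.
    return [v * factor for v in values]


def running_sum(values):
    # Each iteration needs the total from the previous one, so this loop
    # is not trivially parallel as written (parallel scan algorithms exist,
    # but they restructure the computation).
    total = 0
    out = []
    for v in values:
        total += v
        out.append(total)
    return out


print(scale([1, 2, 3], 2))
print(running_sum([1, 2, 3]))
```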

Frequently asked questions about Vector Processor IP cores

What is the Parallel Processing Unit?

The Parallel Processing Unit is a Vector Processor IP core from Flow technology listed on Semi IP Hub.

How should engineers evaluate this Vector Processor?

Engineers should review the overview, key features, supported foundries and nodes, maturity, deliverables, and provider information before shortlisting this Vector Processor IP.

Can this semiconductor IP be compared with similar products?

Yes. Buyers can compare this product with similar semiconductor IP cores or IP families based on category, provider, process options, and structured technical specifications.